Nature Report: Best AI Agents Still Score Half of Human Scientists — A Reality Check for the Agent Hype

Nature reports that top AI agents achieve only about 50% of PhD-level expert performance on complex scientific tasks, according to the Stanford AI Index 2026. Research also reveals a paradox: AI tools boost individual productivity while narrowing the scope of scientific inquiry.

50%. That's how well the best AI agents perform compared to human scientists on complex tasks.

According to a Nature report this week, the most capable AI agents available today achieve only about half the performance of PhD-level experts on complex scientific tasks. The source is the Stanford AI Index 2026 report — a 400-plus-page document released by Stanford HAI on April 13.

In an era when everyone's betting the farm on AI agents, this is a sobering dose of reality. The Nature summary puts it bluntly: "On tasks that require judgment, planning, and verification, agents are still playing junior varsity — they cannot reliably chain six steps together, cannot tell when they are wrong, and when they are wrong, they are confidently wrong in ways that waste a scientist's entire afternoon."

Why This Report Lands Now

2025 and 2026 have been the years of the agent. Anthropic's Claude Code, OpenAI's Codex, Cognition's Devin, and Google's Jules all shipped in rapid succession. Corporate AI investment doubled from $253 billion in 2024 to $581 billion in 2025, with a disproportionate share flowing into agent startups.

The prevailing narrative was that agents would soon replace 80% of white-collar work. The benchmark numbers seemed to support it. SWE-bench Verified (software engineering) climbed from 60% to nearly 100%. OSWorld (computer-use automation) jumped from 12% to 66%. Humanity's Last Exam moved from OpenAI o1's 8.8% in 2025 to over 50% in 2026.

The Stanford AI Index 2026 is the scientific microscope applied to that marketing narrative. Single-benchmark scores look explosive, but when researchers stitched together the kind of multi-step workflow that mirrors real science — plan, run, verify, adjust — the gap to human experts remained wide.

Source: commons.wikimedia.org · CC-BY-SA 3.0

Source: commons.wikimedia.org · CC-BY-SA 3.0

Method Breakdown — What Got Measured, and How

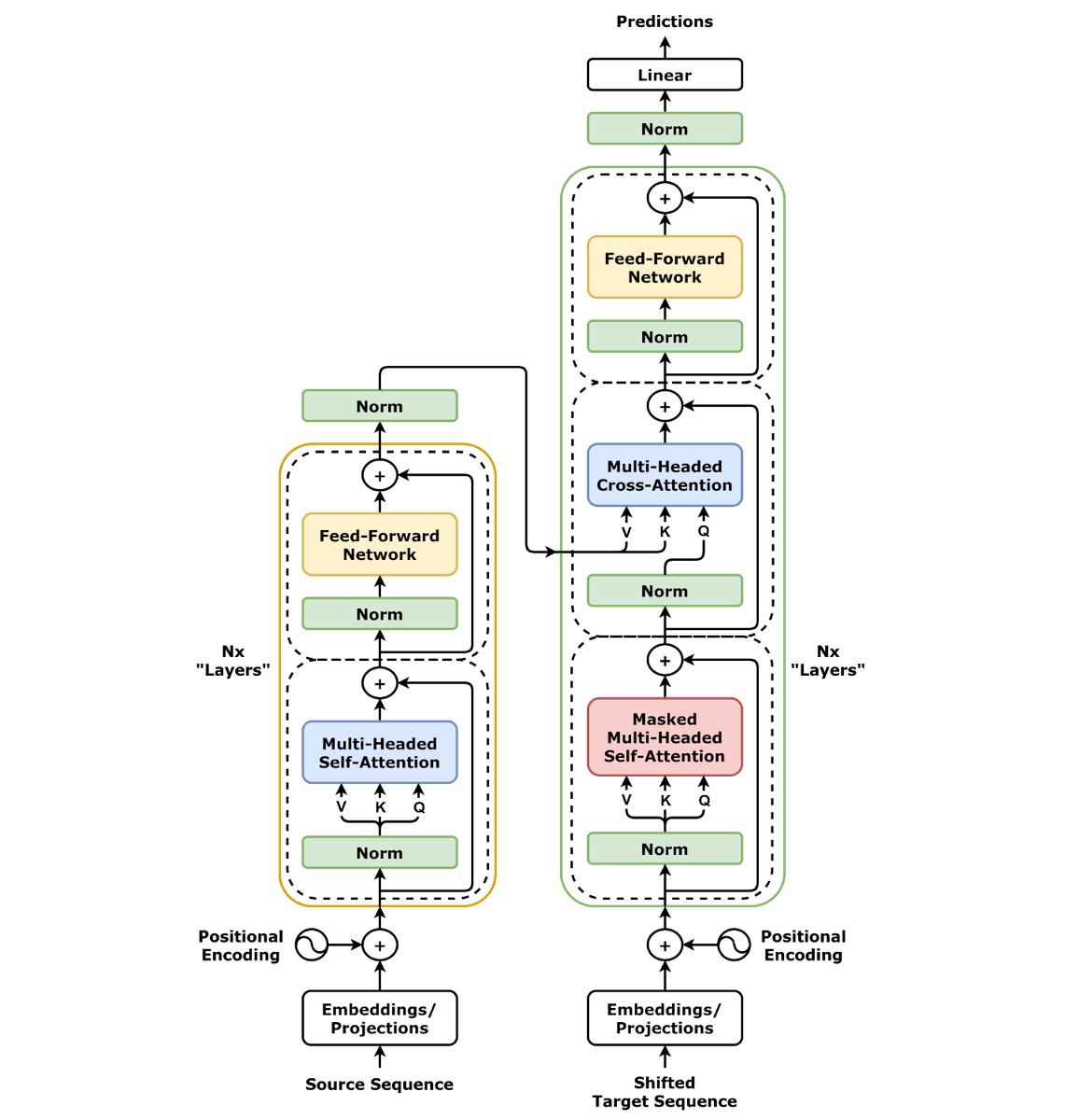

The AI Index 2026 cross-measures agent performance across multiple benchmarks because chatbots and agents are fundamentally different systems that need different metrics.

| Feature | Chatbot | AI Agent |

|---|---|---|

| Interaction | Single Q&A | Multi-step autonomous execution |

| Tool use | Limited | Code execution, API calls, file manipulation |

| Planning | None | Goal decomposition and step-by-step execution |

| Examples | ChatGPT (basic), Claude (basic) | Claude Code, Devin, OpenAI Codex |

The report leans on several headline benchmarks. Humanity's Last Exam (HLE) is an extremely hard question set authored by top domain experts, meant to probe PhD-level reasoning. OSWorld tests agents running real computer tasks inside real operating systems. SWE-bench Verified measures software-engineering problem-solving on real GitHub issues. ClockBench checks a deceptively basic perceptual task: reading analog clocks.

The interesting finding is that agent performance diverges wildly between these benchmarks. On some tasks agents surpass humans, on others they collapse. Researchers call it the "jagged frontier" — the capability surface has sharp peaks next to deep valleys, with no smooth gradient between them.

Results — The Jagged Frontier in Numbers

Line up the Stanford AI Index 2026 benchmarks side by side and the pattern becomes stark. On single-step, well-defined tasks, models are catching and passing human performance fast. The moment multi-step judgment enters the picture, the numbers crater.

| Benchmark | What It Tests | 2025 SOTA | 2026 SOTA | Human Comparison |

|---|---|---|---|---|

| SWE-bench Verified | Software engineering | ~60% | near 100% | Exceeds many developers |

| OSWorld | OS-level automation | 12% | ~66% | Still 33% failure rate |

| Humanity's Last Exam | Multi-domain PhD reasoning | 8.8% (o1) | 50%+ | Half of PhD experts |

| ClockBench | Reading analog clocks | — | ~50% | Kindergarten task, coin-flip result |

| Complex science workflows | Experiment design/execution | — | ~50% | Half of PhD experts |

The headline message focuses on science-focused agents. When researchers put AI agents in charge of autonomously designing and running experiments, the best of them landed at roughly half of PhD-expert performance. The failure mode is specific: on tasks longer than six steps, agents don't notice mid-chain errors and stack confidently wrong answers on top of them.

On narrow, repetitive tasks the scores are remarkable. Cybersecurity triage reportedly climbed from 15% to 93% in the same window. The structural conclusion isn't "agents are bad" or "agents are good" — it's that agent capability is growing unevenly across domains.

Source: commons.wikimedia.org · CC-BY-SA 4.0

Source: commons.wikimedia.org · CC-BY-SA 4.0

Limitations + Criticism — Benchmarks Are Maps, Not Territory

A critical reading of this report matters too.

First, the "PhD-level" framing around Humanity's Last Exam is debatable. PhDs wrote the questions, but individual PhDs don't correctly answer problems outside their narrow specialty either. "Half of PhD performance" is really "half of the average across many specialities." Within-field comparisons would look very different, sometimes better, sometimes worse.

Second, agent benchmarks are still young. OSWorld, SWE-bench Verified, and PaperBench have existed for one or two years at most. Small tweaks in task setup shift scores dramatically. A 50% on a given benchmark today may not mean what "50%" will mean after the community settles on stable evaluation norms.

Third, Nature's companion study flags a different kind of risk. Scientists using AI tools produce more output individually but converge on narrower research topics. The tools nudge researchers toward problems where AI works well. AI is already mentioned in 6 to 9 percent of natural-science publications, and that convergence effect will compound.

AI tools are simultaneously boosting individual scientist productivity and narrowing the creative scope of science as a whole. It's paradoxical yet intuitive: when a tool makes a particular methodology easy, people converge on that methodology.

Field Context — The Short Lineage of Agent Benchmarks

Agent evaluation methodology has moved at unusual speed. The timeline below shows how quickly the centre of gravity shifted.

| Year | Flagship Benchmark | Character |

|---|---|---|

| 2019 | SuperGLUE | Natural language understanding, single-turn |

| 2021 | MMLU | 57-subject knowledge test, pre-PhD level |

| 2023 | HumanEval, GSM8K | Code and math reasoning, still single-task |

| 2024 | SWE-bench, GPQA | Real GitHub issues, graduate-level knowledge |

| 2025 | OSWorld, Humanity's Last Exam | Multi-step agent use, PhD-level questions |

| 2026 | AI Index framing | Autonomous execution of scientific workflows |

Between 2024 and 2025, the centre of mass moved from "can it answer?" to "can it complete the task?" The 2026 verdict: answer-accuracy rose fast, but completion rate on workflows longer than six steps is stalled.

Source: commons.wikimedia.org · CC-BY-SA 4.0

Source: commons.wikimedia.org · CC-BY-SA 4.0

The Bigger Picture — Reality-Checking the Agent Hype

"Agent" is the undisputed buzzword of 2026. Anthropic's Claude Code, OpenAI's Codex, Devin, and dozens of agent startups have launched this year. Venture capital is pouring in — US corporate AI investment alone hit $344 billion in 2025.

But what the Nature report reveals is that agent capabilities still fall significantly short of what the marketing promises. Agents excel at simple, repetitive tasks. For complex judgment, creative problem-solving, and multi-step reasoning, humans remain overwhelmingly superior. A domain expert catches a mid-chain mistake in 30 seconds; an agent piles three hours of work on top of a faulty assumption. That wasted afternoon is a real, measurable cost.

This doesn't mean agents are useless. It means expectations need calibrating. Benchmark scores are still climbing fast, but their transfer rate into real-world completion is lagging significantly.

What This Means for You

Four takeaways for developers and researchers.

First, AI agents work best as assistants, not replacements. Rather than delegating entire workflows, the most effective setup is automating the repetitive parts while humans handle complex judgment. For anything longer than six chained steps, insert forced human checkpoints — that's where agents silently stack confidently wrong answers.

Second, the "AI will take my job" fear is premature for complex knowledge work. Simple, repetitive work is a different story — that automation is already happening fast. "People who leverage AI well will outperform those who don't" is already reality. Separate those two dynamics when planning your skill investments.

Third, be aware of the "diversity trap" when using AI tools. If you only follow AI suggestions, your output converges toward the mean. Nature's companion research shows AI tools boost individual productivity while compressing collective diversity. Deliberately exploring directions the AI doesn't suggest could become a genuine competitive edge.

Fourth, study the evaluation frameworks themselves. Understanding how SWE-bench, OSWorld, and HLE are actually constructed is the only way to read vendor benchmark numbers correctly. "98% on X-bench" almost never means "98% of real tasks completed." That distinction is baseline literacy now.

References

- Human scientists trounce the best AI agents on complex tasks (Nature)

- AI tools boost individual scientists but could limit research as a whole (Nature)

- Stanford 2026 AI Index Report (Stanford HAI)

- Stanford's AI Index for 2026 Shows the State of AI (IEEE Spectrum)

- AI Agents Score Half as Well as PhDs on Real Work (Humai.blog)

- Want to understand the current state of AI? Check out these charts (MIT Technology Review)

출처

관련 기사

AI 트렌드를 앞서가세요

매일 아침, 엄선된 AI 뉴스를 받아보세요. 스팸 없음. 언제든 구독 취소.