NVIDIA GTC 2026: Agentic AI Takes Center Stage, Vera CPU Crushes the Bottleneck

GTC 2026 centers on agentic AI. As AI evolves from chatbots to autonomous agents, the CPU becomes the new limiting factor.

Hook: AI Finally Let Go of the Leash

The era of chatbots answering questions and stopping there is over. Now AI tackles jobs on its own.

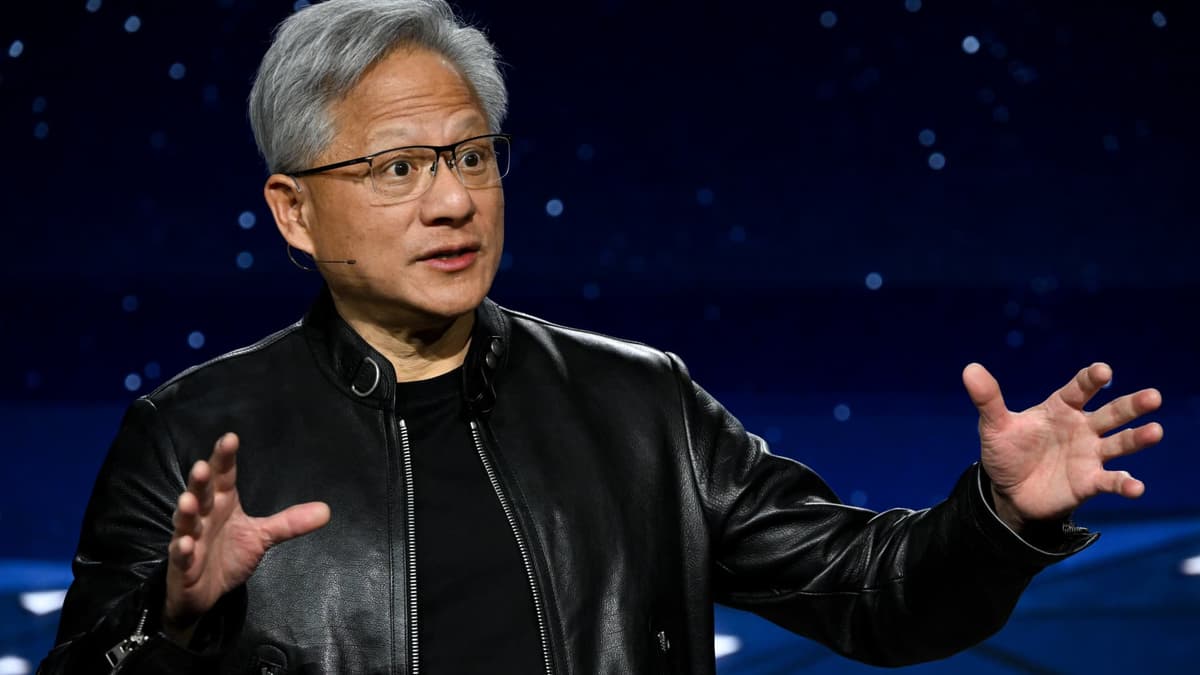

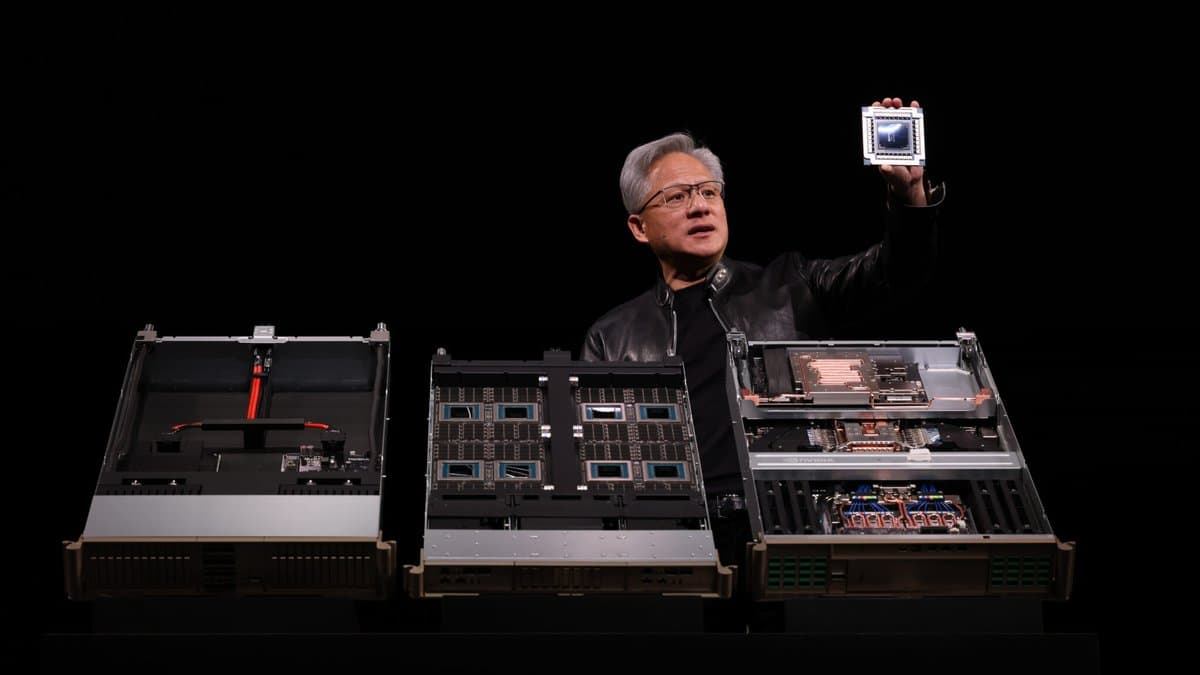

At NVIDIA GTC 2026, CEO Jensen Huang put agentic AI front and center: AI that takes instructions and solves problems autonomously. Alongside it came a new CPU to power it: Vera.

Here's the deal: for the past two years, GPUs dominated the LLM era. But agents are different. Thousands of tiny decisions, tool calls, and memory management happen continuously. The CPU orchestrating all this became the new bottleneck. NVIDIA finally admitted it—and is fixing it with their own CPU.

This is a paradigm shift for enterprise AI.

To Understand This: Why Agentic AI Now

From Chatbot to Agent: How AI Evolved

When GPT first arrived, AI was a "question answering machine." You asked, it answered. Done.

That's changing. Companies are now using AI for real work.

Imagine your accounting team says: "Build me a quarterly expense report." An agentic system works like this:

- Pulls transaction records from the database (tool call)

- Filters relevant expenses (logic)

- Calculates and builds charts (another tool)

- Formats it to spec

- Validates and sends to approvers (yet another tool)

This isn't "one answer." It's dozens of decisions chained together. Tool selection, error handling, memory reallocation—the CPU orchestrates all of it.
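The expense-report chain above can be sketched as a sequence of steps that share one state object. This is a minimal illustration, not any vendor's agent framework: the `LEDGER` data, the step functions, and the approver address are all hypothetical stand-ins for real tools.

```python
from dataclasses import dataclass

@dataclass
class Transaction:
    category: str
    amount: float
    quarter: str

# Hypothetical in-memory "database"; a real agent would query an actual store.
LEDGER = [
    Transaction("travel", 1200.0, "Q1"),
    Transaction("software", 300.0, "Q1"),
    Transaction("travel", 450.0, "Q2"),
    Transaction("payroll", 9000.0, "Q1"),
]

def fetch_transactions(state: dict) -> dict:
    """Tool call: pull transaction records."""
    state["rows"] = list(LEDGER)
    return state

def filter_expenses(state: dict) -> dict:
    """Logic: keep the requested quarter and drop payroll."""
    state["rows"] = [
        r for r in state["rows"]
        if r.quarter == state["quarter"] and r.category != "payroll"
    ]
    return state

def summarize(state: dict) -> dict:
    """Another tool: total the amounts per category."""
    totals: dict = {}
    for r in state["rows"]:
        totals[r.category] = totals.get(r.category, 0.0) + r.amount
    state["totals"] = totals
    return state

def format_report(state: dict) -> dict:
    """Format the totals as a plain-text report."""
    lines = [f"Expense report {state['quarter']}"]
    lines += [f"{cat}: {amt:.2f}" for cat, amt in sorted(state["totals"].items())]
    state["report"] = "\n".join(lines)
    return state

def validate_and_send(state: dict) -> dict:
    """Yet another tool: validate, then record who would receive the report."""
    if not state["totals"]:
        raise ValueError("no expenses found for this quarter")
    state["sent_to"] = ["approver@example.com"]  # hypothetical recipient
    return state

STEPS = [fetch_transactions, filter_expenses, summarize, format_report, validate_and_send]

def run_agent(quarter: str) -> dict:
    """Orchestrator: run each step in order and log what ran."""
    state = {"quarter": quarter, "log": []}
    for step in STEPS:
        state = step(state)
        state["log"].append(step.__name__)
    return state
```

Calling `run_agent("Q1")` walks all five steps and leaves the report text in `state["report"]`. The orchestration loop itself does very little math, which is the point: this glue work lands on the CPU, not the GPU.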

| Aspect | Chatbot Era (2023–2024) | Agent Era (2026+) |
|---|---|---|
| I/O Model | One question → one answer | One goal → many tool calls |
| Bottleneck | GPU (inference) | GPU + CPU (orchestration) |
| Latency | Seconds | Minutes |
| Memory | Simple | Complex (state, accumulation) |
| Failure Recovery | Ask again | Auto-retry, reroute |

Agents aren't just smarter chatbots. They need a fundamentally different architecture.
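The "auto-retry, reroute" row in the table is the easiest difference to show in code. Here is a rough sketch, assuming each tool is a plain Python callable: retry a tool a few times, then fall through to the next one. The names `call_with_recovery`, `primary_db`, and `replica_db` are made up for illustration.

```python
import time
from typing import Callable, Optional, Sequence

def call_with_recovery(
    tools: Sequence[Callable[[str], str]],
    arg: str,
    retries: int = 2,
    backoff_s: float = 0.0,
) -> str:
    """Try each tool in order; retry it `retries` times before rerouting."""
    last_error: Optional[Exception] = None
    for tool in tools:
        for attempt in range(retries):
            try:
                return tool(arg)
            except Exception as exc:  # broad on purpose: any failure triggers recovery
                last_error = exc
                time.sleep(backoff_s * (2 ** attempt))  # exponential backoff
    raise RuntimeError("all tools failed") from last_error

# Hypothetical tools: a primary that always times out and a replica that works.
calls = {"primary": 0}

def primary_db(query: str) -> str:
    calls["primary"] += 1
    raise TimeoutError("primary unavailable")

def replica_db(query: str) -> str:
    return f"replica result for {query}"
```

With `call_with_recovery([primary_db, replica_db], "q3 expenses")`, the primary is tried twice, then the call is rerouted to the replica. A chatbot would have returned the error and waited for the user to ask again.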

In 2024, companies tried building agents with LangChain or AutoGPT. In production, many of them crawled. A big part of the reason: CPU-side orchestration couldn't keep up.

NVIDIA's declaration—"We're building a CPU too"—signals agentic AI is officially here.

Dissecting the Details: Vera CPU and the Agent Platform

Vera CPU: What's Behind the Name

NVIDIA built its name on GPUs. With Vera, which follows its earlier Grace CPU, it is pushing further into CPUs. That may seem odd, but the logic holds:

- CPU-GPU Sync Problem: Enterprises used AMD or Intel CPUs paired with NVIDIA GPUs for agents. Data transfer between them bottlenecked. Memory buses became the limiting factor.

- Unified Optimization: When NVIDIA controls both CPU and GPU, the entire stack (CPU + GPU + CUDA) optimizes as one. Way more efficient.

- 2026+ Necessity: Real-scale agent deployment needs more than a "fast" CPU. It needs a CPU built for agentic workloads. Vera is that.

Vera's key specs:

| Aspect | Details |
|---|---|
| Cores | 192 (power-efficient design) |
| Memory Bandwidth | 8+ TB/s (GPU sync optimized) |
| AI Operations | 256-bit native (Integer, FP32) |
| TDP | Under 300 W (efficiency-first) |
| Workload Target | Agent orchestration, tool queuing, state management |

Vera isn't an "AI CPU." It's an "agent CPU." Single-thread performance matters less than agent runtime patterns—parallel tool calls, fast context switching, priority queue handling—all optimized.
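Two of those runtime patterns, parallel tool calls and priority queue handling, are easy to picture in software. The sketch below uses only Python's standard library and says nothing about how Vera implements them in hardware. The tool names and delays are hypothetical.

```python
import asyncio
import heapq
from typing import List, Tuple

async def call_tool(name: str, delay_s: float) -> str:
    """Stand-in for an I/O-bound tool call (database, search, API)."""
    await asyncio.sleep(delay_s)
    return f"{name} done"

async def run_parallel(calls: List[Tuple[str, float]]) -> List[str]:
    """Issue all tool calls at once; results come back in request order."""
    return await asyncio.gather(*(call_tool(name, delay) for name, delay in calls))

def drain_by_priority(tasks: List[Tuple[int, str]]) -> List[str]:
    """Pop queued tasks lowest-number-first, the way a scheduler would."""
    heap = list(tasks)
    heapq.heapify(heap)
    order = []
    while heap:
        _, name = heapq.heappop(heap)
        order.append(name)
    return order
```

`asyncio.run(run_parallel([("db", 0.02), ("search", 0.01)]))` finishes in roughly the time of the slowest call, not the sum. `drain_by_priority` shows why a retry of a failed call can jump ahead of routine formatting work when it is queued with a lower number.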

Open Agents Platform: "Agents Must Be Open"

NVIDIA announced something else crucial: Open Agents Platform, an open-source framework.

This matters:

- Standardization: Until now, developers built agents on different frameworks (LangChain, CrewAI, AutoGPT). No unified standard.

- Portability: Build once on Open Agents Platform, run anywhere (AWS, Azure, on-prem).

- NVIDIA's Angle: This platform reaches peak performance on Vera CPU + NVIDIA GPU. Other setups work, but optimization is NVIDIA's.

Open platforms don't beat NVIDIA—they make NVIDIA the standard.

The Bigger Picture: Agentic AI War Just Began

What the Competition Is Doing

NVIDIA isn't alone in this.

Google: Released NotebookLM agents last year and embedded agents into Gemini 2.0. It pairs its own Arm-based Axion CPUs with TPUs that improve constantly.

Microsoft: Partners with OpenAI on Copilot agents and is pushing Copilot deployments on Azure. It already has its own Arm-based Cobalt CPU, and its in-house Maia accelerators aim to cut costs.

Meta: Open-sourced Llama agents. Less CPU optimization focus, more on framework.

AMD: Rumors of a Vera competitor. NVIDIA moved first, and the ecosystem already favors them.

2026 will be the watershed year for agentic AI standards. Whoever controls the platform controls cloud infra for the next decade.

Adobe Partnership: Creative Agents Are Coming

The most surprising GTC announcement was Adobe's strategic tie-up.

Adobe makes Photoshop and Premiere. What does that have to do with agentic AI?

Most likely scenario:

"Tell the AI your creative idea. It autonomously orchestrates Photoshop, After Effects, and Stock libraries into a final product."

Example flow:

- Creator says: "Make 10 spring-themed social banners, here's the product"

- The agent:

  - Finds background images in Stock library (tool call)

  - Auto-layers in Photoshop (Adobe API)

  - Auto-positions text, color, font

  - Generates 10 variations in one shot

  - Uploads to cloud

- Creator reviews, makes tweaks if needed

If this works, creative workflows transform overnight.

Adobe Creative Cloud has ~20 million paid users. If 10% adopt agent-first workflows, that's roughly 2 million users: a massive agent market. And those agents run on NVIDIA infrastructure.

So What Changes

How Developers Respond

Phase 1: Learn Agent Frameworks (Now)

- Read Open Agents Platform docs.

- Build a Python agent from scratch.

- Understand how it differs from chatbot code.
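To see how agent code differs from chatbot code, compare a single call with a loop that keeps choosing the next action. This is a toy sketch: `scripted_policy` is a hypothetical stand-in for a model deciding what to do, and the two tools are trivial on purpose.

```python
from typing import Any, Callable, Dict, List, Tuple

def chatbot(question: str, model: Callable[[str], str]) -> str:
    """Chatbot shape: one question in, one answer out."""
    return model(question)

def agent(
    goal: str,
    policy: Callable[[str, List[tuple]], Tuple[str, Any]],
    tools: Dict[str, Callable[[Any], Any]],
    max_steps: int = 10,
) -> Tuple[Any, List[tuple]]:
    """Agent shape: loop until the policy says 'finish' or the budget runs out."""
    history: List[tuple] = []
    for _ in range(max_steps):
        action, arg = policy(goal, history)
        if action == "finish":
            return arg, history
        observation = tools[action](arg)             # tool call
        history.append((action, arg, observation))   # accumulated state
    raise RuntimeError("step budget exhausted")

def scripted_policy(goal: str, history: List[tuple]) -> Tuple[str, Any]:
    """Hypothetical stand-in for an LLM picking the next step."""
    if not history:
        return "add", (2, 3)
    if len(history) == 1:
        return "double", history[-1][2]
    return "finish", history[-1][2]

TOOLS = {
    "add": lambda pair: pair[0] + pair[1],
    "double": lambda x: x * 2,
}
```

Running `agent("compute (2+3)*2", scripted_policy, TOOLS)` makes two tool calls before finishing. The loop, the history, and the step budget are what a chatbot never needed.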

Phase 2: Vera Specs Deep Dive (Next 3–4 Months)

- Wait for NVIDIA's formal release, then read the published specs.

- Understand how your agent runs optimally on Vera.

- Optimize memory layout, tool queue architecture.

Phase 3: Production Deployment (H2 2026–2027)

- Spin up Vera-based servers.

- Deploy agents on Open Agents Platform.

- Measure cost vs. performance.

Cost Implications

Large-scale LLM inference already costs millions in GPU spend alone. Add a new CPU to the mix: does total cost rise or drop?

Optimistic: Vera streamlines agent orchestration, cutting costs 30–40% for the same work. Agents pick tools smarter, cutting unnecessary GPU ops.

Pessimistic: Vera adds cost. Agents on existing AMD/Intel CPUs work fine, so unless the cost advantage is clear, adoption lags.

Reality: Probably in between. Early costs rise, but 6–12 months in, economies of scale kick in.
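To make the scenarios concrete, here is the arithmetic on a made-up budget. The $1,000,000 monthly baseline and the +10% pessimistic figure are assumptions for illustration only. Only the 30–40% optimistic range comes from the scenarios above.

```python
def projected_cost(baseline_usd: int, change_pct: int) -> int:
    """Apply a percentage change to a baseline, in whole dollars."""
    return baseline_usd * (100 + change_pct) // 100

BASELINE_USD = 1_000_000  # hypothetical monthly infrastructure spend

SCENARIOS = {
    "optimistic (30% cut)": -30,
    "optimistic (40% cut)": -40,
    "pessimistic (assumed +10%)": 10,  # assumption, not a figure from the text
}

def cost_table(baseline_usd: int = BASELINE_USD) -> dict:
    """Return the projected monthly cost for each scenario."""
    return {name: projected_cost(baseline_usd, pct) for name, pct in SCENARIOS.items()}
```

On that baseline, the optimistic range works out to $600,000–$700,000 a month. Swap in your own numbers before drawing conclusions.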

Where the Money Is

- Agent Development Firms: Open Agents Platform standardizes the field. Building enterprise-grade agents becomes an industry.

- NVIDIA Ecosystem Players: Vera-based hosting, managed services. AWS, Azure prepping now.

- Domain-Specific Agents: Healthcare (diagnostic support), finance (trade automation), legal (contract review). Real value here.

- Creative Agents: Adobe partnership opens doors. Video production, graphic design, music generation automation.

Key Takeaways

Here's what you need to know:

- Agentic AI is real: Not hype. Companies are already deploying autonomous agents.

- The CPU was the bottleneck: GPUs excel at math, CPUs excel at orchestration. Agents need both.

- Vera signals intent: NVIDIA building CPUs means they're betting everything on agent infra.

- Open Agents Platform = NVIDIA standard: Open doesn't mean vendor-neutral. It means a standard that NVIDIA leads.

- The Adobe move is strategic: Creative AI agents unlock a new market.

For developers: start learning agents now. The wave is coming.
