Intel Arc Pro B70: 32GB VRAM for $949 — The Local LLM GPU That Changes the Math

Intel's Arc Pro B70 delivers 32GB GDDR6 VRAM at $949, undercutting Nvidia by half. First benchmarks show competitive LLM inference performance, but software support remains the critical question.

$949 for 32GB. That Changes Everything About Local AI

The biggest barrier to running AI on your own hardware is VRAM. A 70B-parameter model needs at least 24GB even at aggressive quantization. Comfortable headroom for 27B-class models at high-quality quantization means 32GB. The problem is that 32GB GPUs barely exist, and when they do, they cost a fortune.

Nvidia's RTX 5090 offers 32GB at $1,999. The professional RTX 6000 Ada has 48GB for $6,800. Even a used RTX 3090, with only 24GB, runs $800+.

Intel just dropped the Arc Pro B70: 32GB GDDR6, 608 GB/s bandwidth, $949. For local LLM inference, those numbers rewrite the value equation.

Why VRAM Matters More Than Compute for LLMs

When you run an LLM, the model's weights load into GPU memory. Bigger model, more VRAM needed. When VRAM runs short, you either quantize the model aggressively (losing quality) or offload parts to CPU RAM (killing speed).

Think of VRAM as desk space. If your book (model) is too big for the desk, you put pages on the floor and reach down every time you need them. Naturally, that's slow.

Here's the VRAM reality for popular open-source models in 2026:

| Model | Parameters | Q4 VRAM | Q8 VRAM |
|---|---|---|---|
| Llama 4 Scout | 109B (17B active) | ~12GB | ~22GB |
| Qwen 3.5 Medium | 27B | ~16GB | ~30GB |
| DeepSeek V3.2 | 671B MoE | ~48GB | N/A |
| Gemma 4 26B | 26B | ~15GB | ~28GB |

With 32GB, you can run 27B models at Q8 (high-quality quantization) with a little room left over for context, and even load 109B MoE models at Q4. That puts GPT-4-class responses on your own PC, with no cloud API required.
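The dense-model rows in the table can be roughly reproduced with back-of-the-envelope arithmetic: weight memory is about parameter count times bits per weight. The sketch below is illustrative only. The effective bit widths (about 4.8 bits for Q4, 8.5 for Q8), the 2GB runtime allowance, and the function names are assumptions rather than measurements, and the rule does not cover MoE models whose experts are partly offloaded.

```python
def weight_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


def fits_in_vram(params_billions: float, bits_per_weight: float,
                 vram_gb: float, overhead_gb: float = 2.0) -> bool:
    """True if the weights plus an assumed runtime allowance fit in VRAM."""
    return weight_gb(params_billions, bits_per_weight) + overhead_gb <= vram_gb


# Assumed effective bits per weight, including quantization scales.
Q4_BITS = 4.8
Q8_BITS = 8.5

for name, params in [("Qwen 3.5 Medium", 27), ("Gemma 4 26B", 26)]:
    q4 = weight_gb(params, Q4_BITS)
    q8 = weight_gb(params, Q8_BITS)
    print(f"{name}: Q4 ~{q4:.1f} GB, Q8 ~{q8:.1f} GB, "
          f"Q8 fits in 32 GB: {fits_in_vram(params, Q8_BITS, 32)}, "
          f"in 24 GB: {fits_in_vram(params, Q8_BITS, 24)}")
```

Under these assumptions a 27B model at Q8 fits on a 32GB card but not on a 24GB one, which is the gap the B70 targets. Long contexts grow the KV cache, so the allowance would need to rise with context length.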

Real-World Performance — The Numbers

Hardware Specs

The Arc Pro B70 is built on Intel's Battlemage architecture. It's not a gaming card — it's designed for professional and AI workloads.

| Spec | Arc Pro B70 | RTX 4090 | RTX 3090 |
|---|---|---|---|
| VRAM | 32GB GDDR6 | 24GB GDDR6X | 24GB GDDR6X |
| Memory bandwidth | 608 GB/s | 1,008 GB/s | 936 GB/s |
| FP32 performance | 22.9 TFLOPS | 82.6 TFLOPS | 35.6 TFLOPS |
| TDP | 150W | 450W | 350W |
| Price | $949 | $1,599 | $800 (used) |

On paper, Nvidia dominates. The B70's FP32 compute is roughly a quarter of the RTX 4090's (about 28%), and its memory bandwidth is about 60% of the 4090's. But LLM inference tells a different story.

LLM token generation is bandwidth-bound, not compute-bound. Speed depends on how fast the GPU can read model weights from memory, because each new token requires reading roughly all of the active weights once. At 608 GB/s, the B70 trails the RTX 4090's 1,008 GB/s, but its 32GB of VRAM lets you load models that simply don't fit on Nvidia's 24GB cards.
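The bandwidth-bound argument can be turned into a rough speed ceiling: divide memory bandwidth by the size of the weights read per token. This is a minimal sketch under the simplifying assumption that every token reads the full weight set exactly once. The function name is illustrative, and the model sizes come from the Qwen 3.5 Medium row of the VRAM table.

```python
def decode_ceiling_tok_s(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Upper bound on tokens/s if each token reads all weights once."""
    return bandwidth_gb_s / weights_gb


# Qwen 3.5 Medium (27B): ~30 GB at Q8, ~16 GB at Q4 (from the table above).
b70_q8 = decode_ceiling_tok_s(608, 30)      # Arc Pro B70 at Q8
rtx3090_q4 = decode_ceiling_tok_s(936, 16)  # RTX 3090 at Q4

print(f"B70 ceiling at Q8:      {b70_q8:.1f} tok/s")
print(f"RTX 3090 ceiling at Q4: {rtx3090_q4:.1f} tok/s")
```

This gives the B70 a ceiling of about 20 tok/s at Q8. Measured throughput lands below such ceilings, sometimes well below, because kernels, KV-cache reads, and software maturity all take their share.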

In early benchmarks, the B70 runs Qwen 3.5-27B at Q8 quantization at roughly 18 tok/s. The RTX 3090 hits about 25 tok/s on the same model, but only after dropping to Q4 so the weights fit in 24GB. Once you account for that quality gap, the B70 is competitive.

The Software Problem

Here's the catch. Intel's AI software ecosystem is still shaky.

Intel archived its ipex-llm repository in January 2026, citing security issues. The replacement, llm-scaler, is a vLLM-based Docker solution that only recently added B70 support. Compared to Nvidia's CUDA ecosystem, where everything just works, Intel has a long road ahead.

The r/LocalLLaMA community captured this tension perfectly. A B70 discussion thread hit 213 upvotes and 133 comments. The positive consensus: "32GB at $949 is revolutionary." The negative consensus: "software support is too weak."

The Bigger Picture — Three-Way GPU War for Local AI

The 2026 local LLM GPU market is a three-horse race. Nvidia dominates with CUDA. AMD is chasing with ROCm. Intel is playing price disruptor.

Nvidia's moat is CUDA. Nearly every AI framework supports CUDA natively, and llama.cpp runs best on Nvidia hardware. The RTX 5090 matches the B70's 32GB at $1,999, but it's perpetually out of stock.

AMD recently launched the AI 395 series with a monstrous 128GB of unified memory, but at $3,000+. ROCm compatibility keeps improving but still trails CUDA in reliability.

Intel's strategy is clear: win on VRAM-per-dollar and bet that community support follows. If the price advantage is overwhelming enough, developers will build the software ecosystem themselves.
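The VRAM-per-dollar claim is easy to check against the prices quoted in this article. The sketch below uses only those figures. The helper name and the "GB per $1,000" unit are illustrative choices, and street prices will differ.

```python
# (VRAM in GB, price in USD), as quoted in this article.
cards = {
    "Arc Pro B70": (32, 949),
    "RTX 5090": (32, 1999),
    "RTX 4090": (24, 1599),
    "RTX 3090 (used)": (24, 800),
}


def gb_per_1000_usd(vram_gb: float, price_usd: float) -> float:
    """VRAM you get for every $1,000 spent."""
    return vram_gb / price_usd * 1000


# Rank cards from most to least VRAM per dollar.
ranked = sorted(cards.items(),
                key=lambda item: gb_per_1000_usd(*item[1]),
                reverse=True)

for name, (vram, price) in ranked:
    print(f"{name:16s} {gb_per_1000_usd(vram, price):5.1f} GB per $1,000")
```

On these numbers the B70 comes out ahead of even a used RTX 3090, and at roughly double the VRAM per dollar of Nvidia's current cards.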

$949 for 32GB. What the Intel B70 really says is that local AI isn't an early adopter hobby anymore — it's becoming economically rational.

What This Means for You

If you're interested in local LLM development, the B70 deserves serious consideration for specific use cases.

Privacy-sensitive workloads top the list. Medical data, legal documents, company secrets — when you can't send data to a cloud API, the B70 lets you run 27B-class models entirely locally.

Cost optimization is another angle. If you're spending $200+ monthly on API calls, a B70 pays for itself in four to five months.
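The payback estimate is simple division, sketched here so you can plug in your own spend. The optional electricity term is an assumption added for illustration: the article's figure ignores power, and the $16 per month below is a hypothetical cost for a 150W card running around the clock.

```python
def payback_months(hardware_usd: float, monthly_api_usd: float,
                   monthly_power_usd: float = 0.0) -> float:
    """Months until avoided API spend covers the hardware price."""
    net_savings = monthly_api_usd - monthly_power_usd
    if net_savings <= 0:
        raise ValueError("no net monthly savings; the card never pays for itself")
    return hardware_usd / net_savings


print(f"$200/month API spend: {payback_months(949, 200):.1f} months")
print(f"$250/month API spend: {payback_months(949, 250):.1f} months")
# Hypothetical electricity cost, assumed for illustration only.
print(f"$200/month, $16 power: {payback_months(949, 200, 16):.1f} months")
```

The calculation assumes the local model fully replaces the API calls. If it only covers part of the workload, use just the replaced portion as the monthly spend.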

For experimentation and learning, 32GB provides comfortable headroom to test and fine-tune various open-source models without constant VRAM management.

The practical advice: unless you need it immediately, consider waiting one to two months for the software ecosystem to stabilize. llama.cpp's Intel support is improving rapidly, and community drivers are evolving fast.

Sources

- Intel Arc Pro B70 and B65 GPUs – Tom's Hardware

- Intel's $949 GPU has 32GB of VRAM – XDA Developers

- Intel B70 GPU: first benchmarks – Hardware Corner

관련 기사

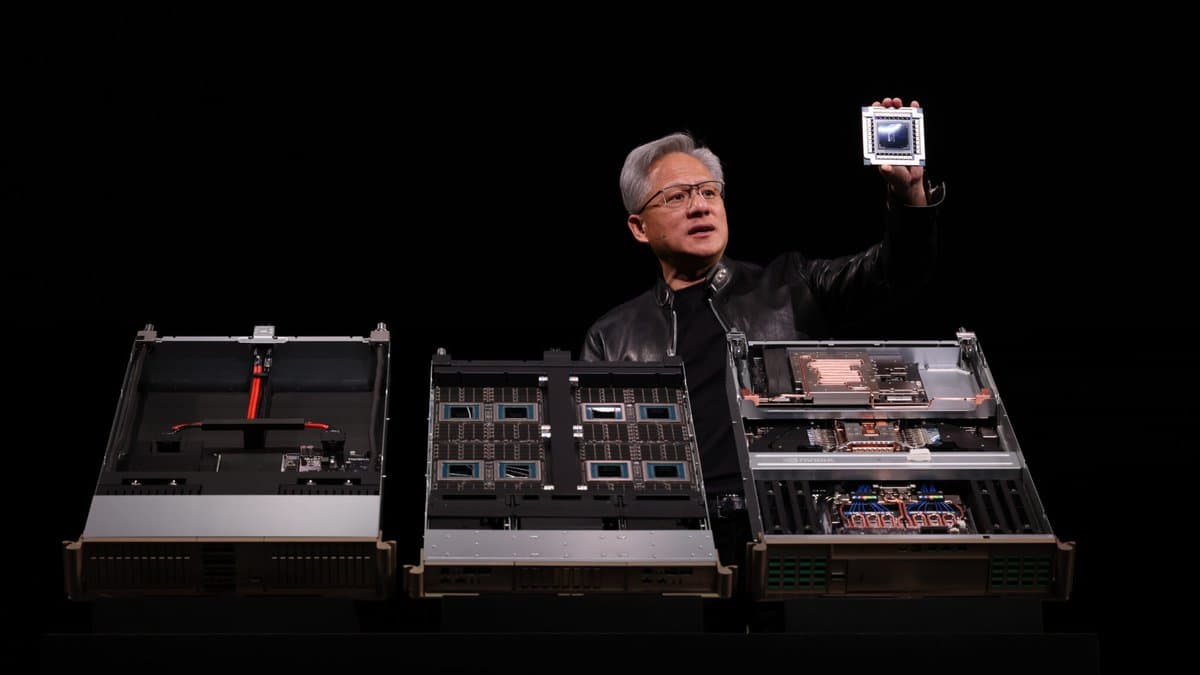

Nvidia GTC 2026: Vera Rubin Unveiled — Complete Technical Breakdown

NVIDIA GTC 2026: $1 Trillion in Orders and Why AI Infrastructure Demand Won't Stop

42.5 ExaFLOPS: Google's Ironwood TPU Rewrites the Inference Playbook

AI 트렌드를 앞서가세요

매일 아침, 엄선된 AI 뉴스를 받아보세요. 스팸 없음. 언제든 구독 취소.