The AI Chip Supply War — Tesla, ASML, Huawei, FluidStack Moved in One Week

Tesla AI5 tape-out, ASML's Q1 2026 guidance raise, Huawei 950PR mass orders, FluidStack's $18B datacenter plan — four signals in one week saying the real AI bottleneck isn't models anymore. It's silicon.

Four Chip Industry Signals in Four Days

This week the AI industry confirmed, four days in a row, that chips — not models — are the real bottleneck.

Monday: ASML raised its 2026 revenue guidance to €38B–€42B. EUV tool orders came in far heavier than expected. Same day, Reuters reported Huawei 950PR chips winning large orders from ByteDance and Alibaba. Wednesday: FluidStack closed a $1B Series C and publicly committed to an $18B datacenter pipeline. Thursday: Electrek broke the Tesla AI5 tape-out story, confirmed dual-sourcing across TSMC Arizona and Samsung Texas.

Taken separately, these are ordinary industry headlines. Taken together, they tell a single story: in 2026, silicon — not models — is the constraint.

The Backstory

In 2023, the AI bottleneck conversation was about data. "Without quality training data, you can't scale." In 2024, it was about models themselves — "who ships GPT-4's successor first?"

From 2025 onward, the bottleneck moved fast.

| Year | Main Bottleneck | Key Players |

|---|---|---|

| 2022 | Parameter scale | OpenAI, Google |

| 2023 | Training data | Scale AI, Anthropic |

| 2024 | Inference optimization | Together, Groq |

| 2025 | Power & cooling | Equinix, Digital Realty |

| 2026 | Chip supply | TSMC, ASML, Huawei |

By 2026, the diagnosis is clear: there aren't enough GPUs. NVIDIA allocations stretch 18 months out. H200s are fully sold through 2027. Even when you get an allocation, HBM (high-bandwidth memory) shortages and packaging constraints push actual delivery further.

Four strategies emerged in response. Build your own chips to reduce NVIDIA dependence (Tesla, Apple, Anthropic–Broadcom). Build a sovereign stack that bypasses sanctions (Huawei 950PR, Hua Hong). Hoard GPUs in hyperscale datacenters (FluidStack, CoreWeave). Expand manufacturing capacity itself (ASML EUV).

This week, all four strategies made news.

Breaking It Down

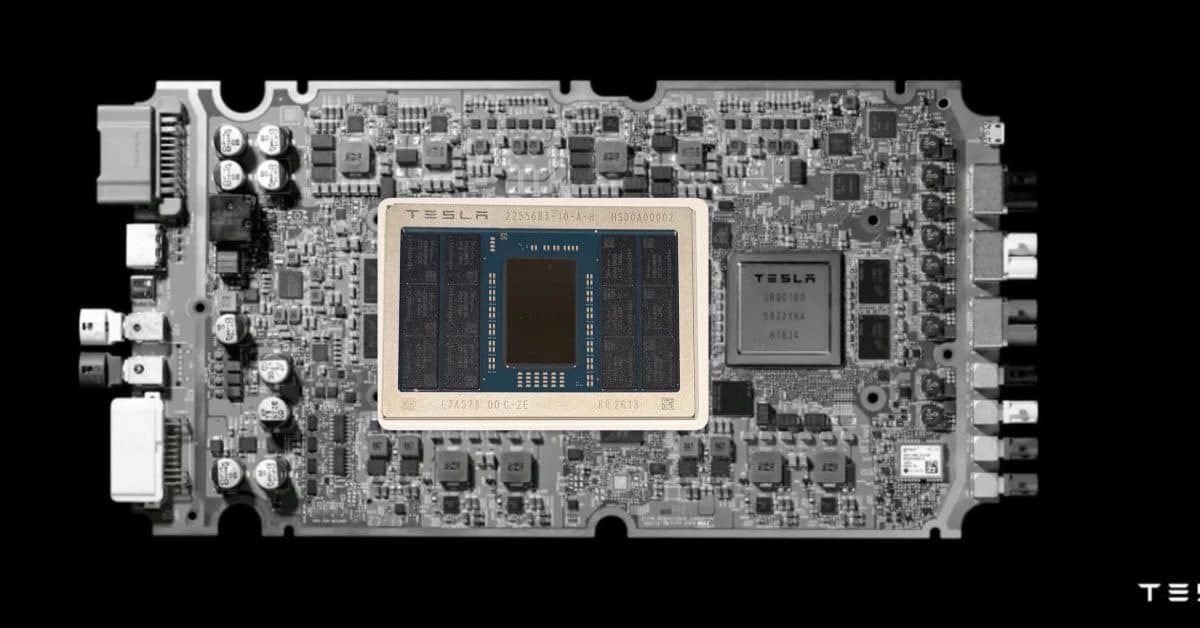

Tesla AI5 — 192GB LPDDR5X on a Single Chip

Tesla AI5's specs push it beyond "automotive chip" into full-stack AI inference territory.

Single-chip inference performance roughly equivalent to an H100. 192GB LPDDR5X memory. 3nm process. And — most notably — dual-sourcing between TSMC Arizona and Samsung Texas. That combination means Tesla can use AI5 not just for FSD but for xAI's Grok training and inference workloads.

Musk also confirmed AI6 and Dojo 3 on the roadmap. AI6 targets 2028 production with HBM4 memory. Dojo 3 is a full proprietary cluster architecture. Tesla is leapfrogging straight from AI5 mass production in 2027 to next-gen within a year.

| Spec | AI4 (2024) | AI5 (2027 target) |

|---|---|---|

| Process | 7nm | 3nm |

| Memory | 32GB LPDDR4X | 192GB LPDDR5X |

| FP16 performance | 37 TFLOPS | ~1,500 TFLOPS (est.) |

| Power | 40W | 400W |

| Fabrication | TSMC only | TSMC + Samsung dual |

Dual-sourcing matters for two reasons. It reduces single-fab risk. And it gives Samsung Foundry its first major AI customer win — a signal that Samsung's 3nm line, long seen as trailing TSMC, is now narrowing the gap on AI-specific workloads.

ASML Guidance Raise — Tools Are Selling Faster

ASML's Q1 2026 earnings bumped annual guidance from €36B–€40B to €38B–€42B. Roughly €4B more in EUV tool orders than analysts expected.

ASML's customers are just four names: TSMC, Samsung, Intel, SK hynix. When those four buy more tools, AI chip production lines are expanding — full stop.

EUV tools have 18–24 month lead times. Machines ordered now come online in 2028. ASML's bookings are a leading indicator for 2028 chip supply. This guidance raise is the industry saying AI infrastructure demand holds through 2028 at minimum.

Huawei 950PR — China's Sovereign Stack

Huawei 950PR landed major orders from ByteDance and Alibaba. Specific numbers weren't disclosed, but analysts peg the combined deal at ~500,000 units and ~$9B in total contract value.

950PR delivers roughly 70% of H100 inference performance at a 40% lower price point — and, crucially, it's outside US export controls. ByteDance is deploying it for TikTok and Douyin recommendation AI. Alibaba is slotting it into Qwen model training and Alimama ad optimization.

IDC released a parallel report showing Huawei's share of China's AI training GPU market jumped from 22% in 2025 to 41% in Q1 2026. China's decoupling from NVIDIA is measurable now.

FluidStack's $18B Datacenter — The Stacking Game

FluidStack closed a $1B Series C (Meta is a participant) and announced an $18B datacenter pipeline.

The key framing: FluidStack is a "neocloud" — a datacenter purpose-built for AI training and inference, not a general-purpose public cloud. 500MW per site, 20 sites, full buildout by 2028.

With CoreWeave, Crusoe, Lambda Labs, and now FluidStack, the top four neoclouds' combined 2028 CAPEX exceeds $220B. That number surpasses the combined AI infrastructure spend of the three legacy hyperscalers (AWS, Azure, GCP). A new tier of the cloud market has fully emerged.

The Bigger Picture

Chain these four signals together and the global AI chip supply chain is splitting into three geopolitical blocs.

The US bloc: NVIDIA, AMD, Intel, plus hyperscalers with proprietary silicon (Anthropic–Broadcom, Google TPU, Tesla AI5). Europe: wired around ASML's monopoly on lithography (80%+ market share). China: Huawei 950PR and Hua Hong building a domestic stack that serves the internal market.

The blocs aren't fully sealed. ASML sells to both US and China. TSMC fabs for NVIDIA, Tesla, Apple, and some Chinese customers. But the directional trend is unmistakable. Late-2020s AI infrastructure is splitting along geopolitical lines.

And that flows back into model development. Tesla training Grok on AI5 will need custom optimizations incompatible with NVIDIA's ecosystem. Qwen running on Huawei 950PR needs a stack outside CUDA. "Just build a great model" is no longer the whole job — "which silicon on which runtime" now determines real-world performance.

What Actually Changes

For developers and enterprises, the concrete shifts this week:

One: cloud choice got more complex. Comparing AWS, Azure, GCP used to be sufficient. Now CoreWeave, FluidStack, and Crusoe sell AI-optimized GPU hours at 40–60% discounts. For large training jobs, these are serious options — the cost gap can reach tens of millions of dollars.

Two: model portability costs are back. PyTorch and JAX used to cover most cases. Now the chip you target influences architecture decisions. Huawei MindSpore, Tesla's proprietary runtime, Google's JAX-on-TPU — each has different optimization philosophies, and porting costs 6–12 months of engineering time.

Three: investors should rebalance. AI model-layer valuations (OpenAI, Anthropic) have already crossed $500B in aggregate. The companies actually running AI — chips, power, cooling — are relatively underpriced. Applied Materials, Lam Research, Arista Networks — expect these names in more headlines a year from now.

Sources

출처

관련 기사

Tesla AI5 Chip Tapes Out – H100-Class Performance, TSMC+Samsung Dual Sourcing

Huawei's 950PR Is China's Bet on Inference-Only Silicon

Huawei Ascend 950PR — 2.8x H20 FP4, and ByteDance + Alibaba Are Already Stockpiling It

AI 트렌드를 앞서가세요

매일 아침, 엄선된 AI 뉴스를 받아보세요. 스팸 없음. 언제든 구독 취소.