Tesla AI5 Chip Tapes Out – H100-Class Performance, TSMC+Samsung Dual Sourcing

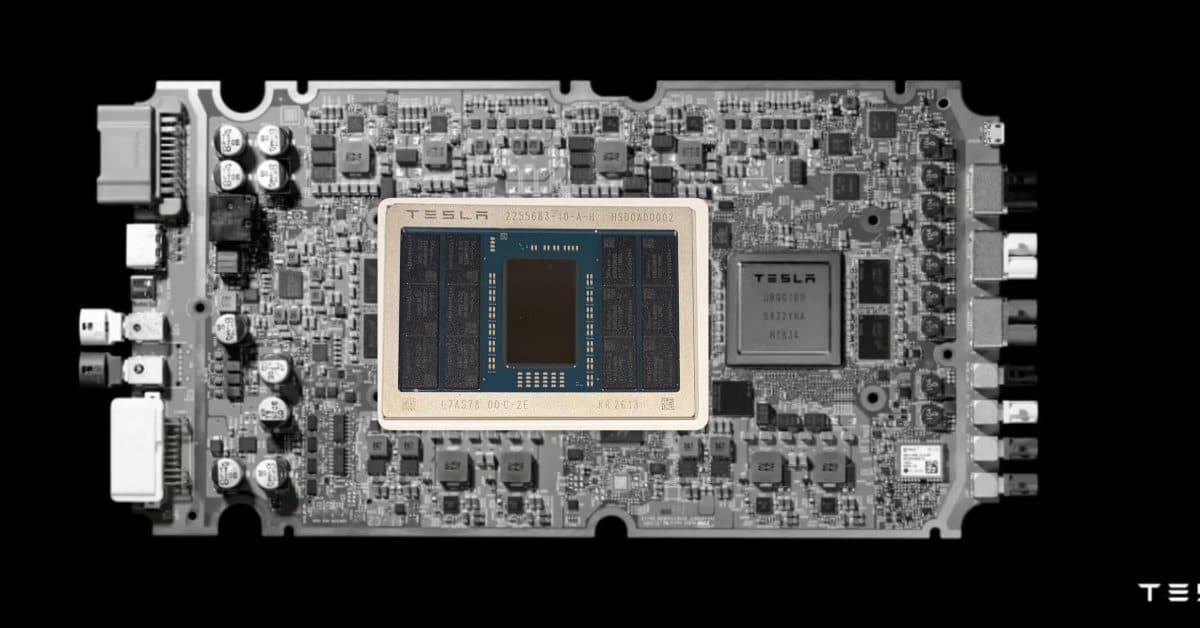

Tesla completed tape-out of the AI5 chip. 192GB LPDDR5X, single-chip inference on par with Nvidia's H100. Dual-sourced at TSMC Arizona and Samsung Taylor – a US-only production strategy. Musk claims it'll be 'the most-produced chip in history.'

40x — the number Musk put out, and why the timing matters

Here's the deal – Tesla just confirmed that the AI5 chip has completed tape-out. If you care about self-driving, humanoid robots, or AI training infrastructure, this matters more than almost anything else that happened this week. "Tape-out" means the design is frozen and sent to the fab. From here it's real silicon, not slides. And Tesla is doing something almost nobody else in the industry attempts at this scale – they're producing the same chip at two different foundries, on two different U.S. sites, at the same time.

The AI5 isn't just another incremental bump. Elon Musk is publicly claiming 40x the AI performance of the AI4 (the current Hardware 4 chip shipping in Model S/X/3/Y and Cybertruck), with a massive 192GB of LPDDR5X memory. The production plan is split between TSMC's Arizona fab and Samsung's Taylor, Texas fab. That means Tesla is building a fully domestic U.S. semiconductor supply chain for its entire AI stack – Full Self-Driving, Optimus, and the Dojo training cluster. This is a very different story from "Tesla makes cars."

To understand this, you need a bit of context

Source: commons.wikimedia.org · CC BY-SA 4.0

Source: commons.wikimedia.org · CC BY-SA 4.0

Tesla's "AI-grade chip" journey has been surprisingly long. In 2019 the company replaced Nvidia's Drive PX2 with its first in-house design, HW3, and built chips specifically to run the FSD neural nets. HW4 followed in 2023, roughly 3-5x more capable. But as FSD v12 shipped as an end-to-end neural network and v13, v14 became even more parameter-heavy, Tesla kept running into the same wall – the chip sitting in the car was the bottleneck.

The AI5 is the answer to that wall. Early roadmap slides put it at around a 10x jump over AI4. 40x is dramatically larger than the roadmap suggested. If the claim holds, Tesla isn't just pushing for better FSD performance – they're embedding large neural networks directly on the vehicle, potentially running something closer to an onboard LLM that interprets scenes in real time and makes decisions.

The other headline is the 192GB of LPDDR5X memory. Automotive chips almost never ship with this much memory. Nvidia's Drive Thor tops out around 64GB. Mobileye's EyeQ7 is in the tens-of-GB range. What does 192GB buy you? It means the car can hold huge models and long context windows in local memory, plus frame buffers, map data, and maybe even a shared memory pool for Optimus – all on the same SoC. This is an architecture designed to serve more than just a car.

Taking the deal apart

Dual foundry — hedging against any single point of failure

Let's go deeper on the really important detail – the dual-foundry strategy. Tesla is producing the AI5 simultaneously at TSMC Arizona (Fab 21) and Samsung Taylor (Texas). Both are on advanced nodes (4nm / 2nm class), both are new-build U.S. facilities.

| Item | AI4 (HW4) | AI5 (HW5) |

|---|---|---|

| Perf (Tesla claim) | 1x baseline | 40x |

| Memory | 16GB GDDR6 | 192GB LPDDR5X |

| Foundry | Samsung (S5, Korea) | TSMC Arizona + Samsung Texas |

| Process | 7nm | 4nm / 2nm class |

| Target apps | FSD | FSD + Optimus + Dojo |

| Geopolitics | Korea-dependent | U.S.-only |

Why two fabs for the exact same chip? Supply chain hedging. The AI4 was single-sourced at Samsung Korea, and when Samsung's Pyeongtaek site had yield issues in late 2024 Tesla's entire FSD rollout slipped. The AI5 approach – same design, two independent fabs – means a single fire, earthquake, or trade dispute can't kill the production line. This is a lesson Apple learned years ago (dual-sourcing the A-series at TSMC and Samsung for a while), but almost nobody in the automotive world has executed it.

CHIPS Act alignment and political tailwinds

The second angle is political. Producing in the U.S. immediately unlocks CHIPS Act subsidies. Both TSMC Arizona and Samsung Taylor are major beneficiaries. Tesla gets tax credits for being a U.S.-manufactured-silicon customer, and the Trump administration's tariffs on Chinese/Korean imported chips do not apply. Put simply – Tesla just moved its AI stack onto U.S. soil overnight.

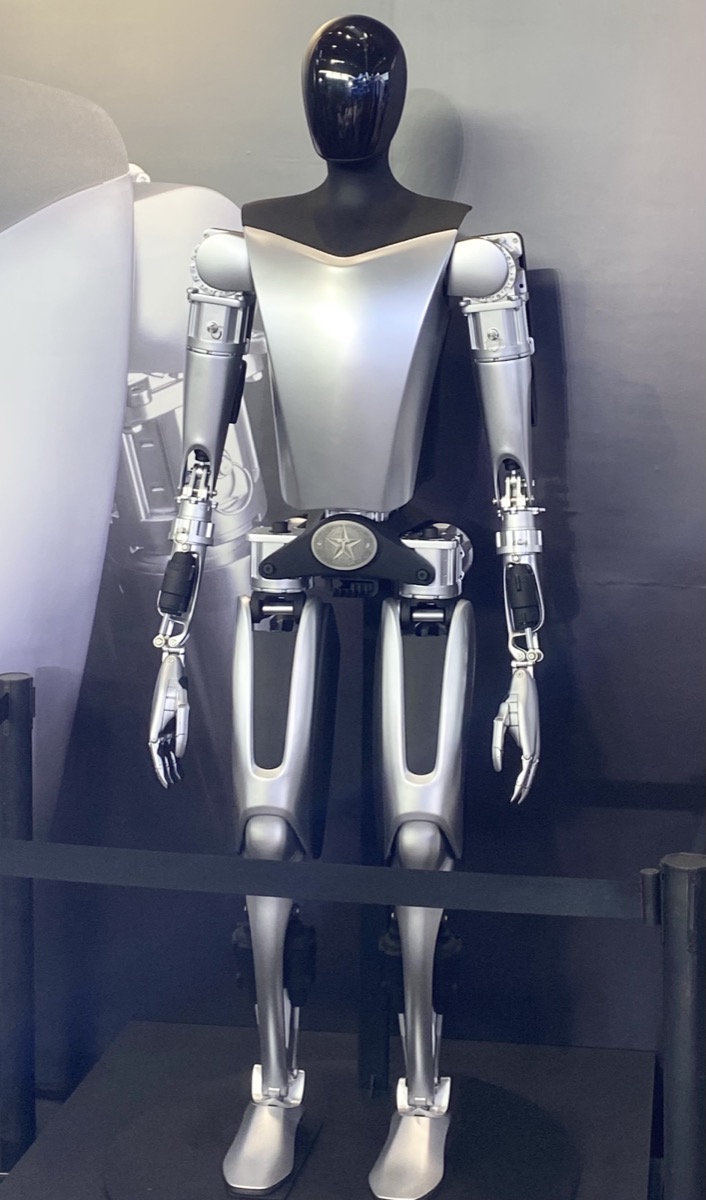

One chip, three products

The third angle is Optimus and Dojo. Musk has repeatedly said the AI5 is shared across vehicles, humanoids, and the training cluster. That means Tesla isn't building three separate chips for three separate products – it's building one SoC and flipping the configuration depending on context. For Optimus, 192GB of memory lets the robot run a full-scale visuomotor foundation model locally. For Dojo, clusters of AI5s become a server-grade training node. That consolidation is economically enormous because the per-chip cost drops at volume.

The roadmap — AI5, AI6, AI6.5, Dojo 3

Musk used the tape-out announcement to trail a longer roadmap, and the details matter for judging how serious Tesla is.

| Milestone | Timing | Notes |

|---|---|---|

| AI5 tape-out | 2026-04-15 | Announced |

| AI5 engineering samples | 2026 H2 | KR2613 marking, Samsung SF2T |

| AI5 volume production | Mid-2027 | TSMC Arizona + Samsung Texas |

| First AI5-equipped vehicle | Late 2026 | Cybertruck refresh, Model S Plaid AI5 (rumored) |

| AI6 tape-out | Dec 2026 target | Originally Samsung SF2 |

| AI6.5 tape-out | 2027 (rumored) | Shifted to TSMC — Samsung yield hedge |

| Dojo 3 deployment | 2027–2028 | AI5 cluster-based |

Engineering samples of AI5 are expected in H2 2026. Volume production has slipped to mid-2027 — nearly two years later than Musk's original 2024 promise of "AI5 vehicles by end of 2025." AI5-equipped vehicles, per current rumors, will debut in a late-2026 Cybertruck refresh and a Model S Plaid AI5 variant. The Cybercab launching in Q2 2026 will still ship on AI4.

AI6 is already in design, with tape-out targeted for December 2026. TrendForce reports that Samsung was originally the sole foundry for AI6 using the SF2 node, but Tesla has now spun up a separate variant called AI6.5 that will be produced at TSMC. The implication — Tesla is hedging against Samsung 2nm yield issues, which are reportedly sitting at 50-55%. AI5 already benefits from Samsung's custom SF2T process that was originally scoped for AI6.

Dojo 3 — the next-generation training cluster — is confirmed in design, scheduled to use clusters of AI5 chips rather than a dedicated data-center SoC. This is the direction Tesla has been moving toward for two years. Instead of building two chips (one for cars, one for training), build one and scale it.

Risks and why the 40x claim needs pressure-testing

Credit where due — tape-out is a real milestone. But several things deserve skepticism.

The 40x figure is a Musk claim, not a third-party benchmark. Historically, Musk's performance numbers run 1.5-2x ahead of what actually ships. HW4 was promised at "5x HW3" and delivered closer to 3x in independent measurements. A more conservative forecast puts AI5 at 15-25x real-world AI4 throughput — still huge, but nothing like 40x.

Then there's execution risk. AI5 is nearly two years late already. Samsung's SF2T process is "customized for Tesla" — which means yield curves that nobody has seen in volume yet. TSMC Arizona has been stable but lags TSMC Taiwan on yield maturity. Either fab could hit issues in 2026-2027 that push volume production further.

Geopolitically, the U.S.-only production story isn't fully insulated either. SK Hynix supplies the LPDDR5X memory, and SK Hynix's leading-edge DRAM is made in Korea. Samsung LPDDR5X is also partly Korean-sourced. "U.S.-made" describes the logic die, not the full package. A Korean supply disruption still affects AI5 output.

Finally, regulatory and legal. If AI5-equipped FSD still requires driver supervision, the 40x hardware advantage buys Tesla little on the robotaxi monetization story. NHTSA's ongoing FSD investigations and California DMV rulings are more binding constraints than silicon performance. Hardware alone doesn't crack L4.

The bigger picture

This story is part of a much larger trend – every AI company is now a semiconductor company. Google with TPU, Amazon with Trainium/Inferentia, Microsoft with Maia, Meta with MTIA, OpenAI reportedly with Broadcom, and now Tesla pushing harder than anyone on vertical integration. They're not just making their own chips – they're making them in their own country, at their own chosen fabs, for their own specific workloads.

The reason is simple. Nvidia's H100s and B200s are great, but they cost a fortune and lead times run 6-12 months. An in-house chip optimized for one workload gives you 10-30x better performance-per-dollar, plus priority supply. This is what Google figured out with TPU a decade ago, and everyone else is now chasing.

Tesla's specific twist is that it's also putting the same chip in millions of cars. That means massive production volume, which drives node yield maturity, which feeds back into the Dojo training side. Circular advantage – the car fleet subsidizes the training stack. Nobody else has this flywheel.

From a geopolitics perspective, the AI5 is a textbook example of the CHIPS-Act-era "America-first AI" strategy. TSMC Arizona and Samsung Texas exist largely because of federal subsidies – roughly $6.6B for TSMC Arizona and $6.4B for Samsung Texas. The Taylor fab, which had suffered from delay after delay, now has a confirmed lead customer at volume. That's a political win that echoes through Commerce Department press releases for years.

The final angle is what this does to competitors. Waymo still uses Nvidia-based compute. Cruise (when it operated) used Nvidia. Mobileye ships EyeQ. None of them have 192GB of memory on-device, and none of them have a dual-fab U.S. supply chain. If Tesla's FSD v14/v15 – running on AI5 – actually delivers robotaxi-grade reliability, the competition's architecture starts to look dated overnight.

Source: commons.wikimedia.org · Public domain

Source: commons.wikimedia.org · Public domain

So what actually changes

For the auto industry – this accelerates the "hardware gap" between Tesla and everyone else. A Ford F-150 Lightning or Hyundai Ioniq 6 now faces a competitor whose in-car compute is literally 40x more capable. Even if Tesla's FSD falls short of full L4, the spec gap is real and visible. Shopping comparisons will have a hard time ignoring it.

For the chip industry – Tesla just became one of TSMC Arizona's most important customers, and the single most important customer for Samsung Taylor. This reshapes U.S. fab economics. Other AI companies will look at Tesla's dual-sourced model as a template. Expect Anthropic, OpenAI, and Meta to follow something similar over the next 18 months.

For consumers – there's a direct subscription story. If AI5-equipped vehicles launch (rumors point to a Cybertruck refresh or a Model S Plaid AI5 variant in late 2026), and FSD actually works the way the 40x number suggests, Tesla's FSD subscription revenue – currently around $99/month – could spike 2-3x. That's the monetization case Musk has been pitching for five years.

For Optimus and robotics – this is the quieter but potentially bigger deal. 192GB of memory means you can run a multimodal foundation model locally on the robot, with vision, audio, and motor control in one pass. Figure, 1X, Unitree, and every humanoid startup is watching. If Tesla ships Optimus V3 on AI5 with real-world generalist skills, the price-performance baseline for humanoid compute gets redefined immediately.

References

출처

관련 기사

The AI Chip Supply War — Tesla, ASML, Huawei, FluidStack Moved in One Week

Samsung Crosses $1T as KOSPI Tops 7,000 — The Real Reason Behind a Single-Session 15% Rip

Meta Unveils 4 Generations of MTIA Custom Chips — Building an Nvidia-Free Inference Stack

AI 트렌드를 앞서가세요

매일 아침, 엄선된 AI 뉴스를 받아보세요. 스팸 없음. 언제든 구독 취소.