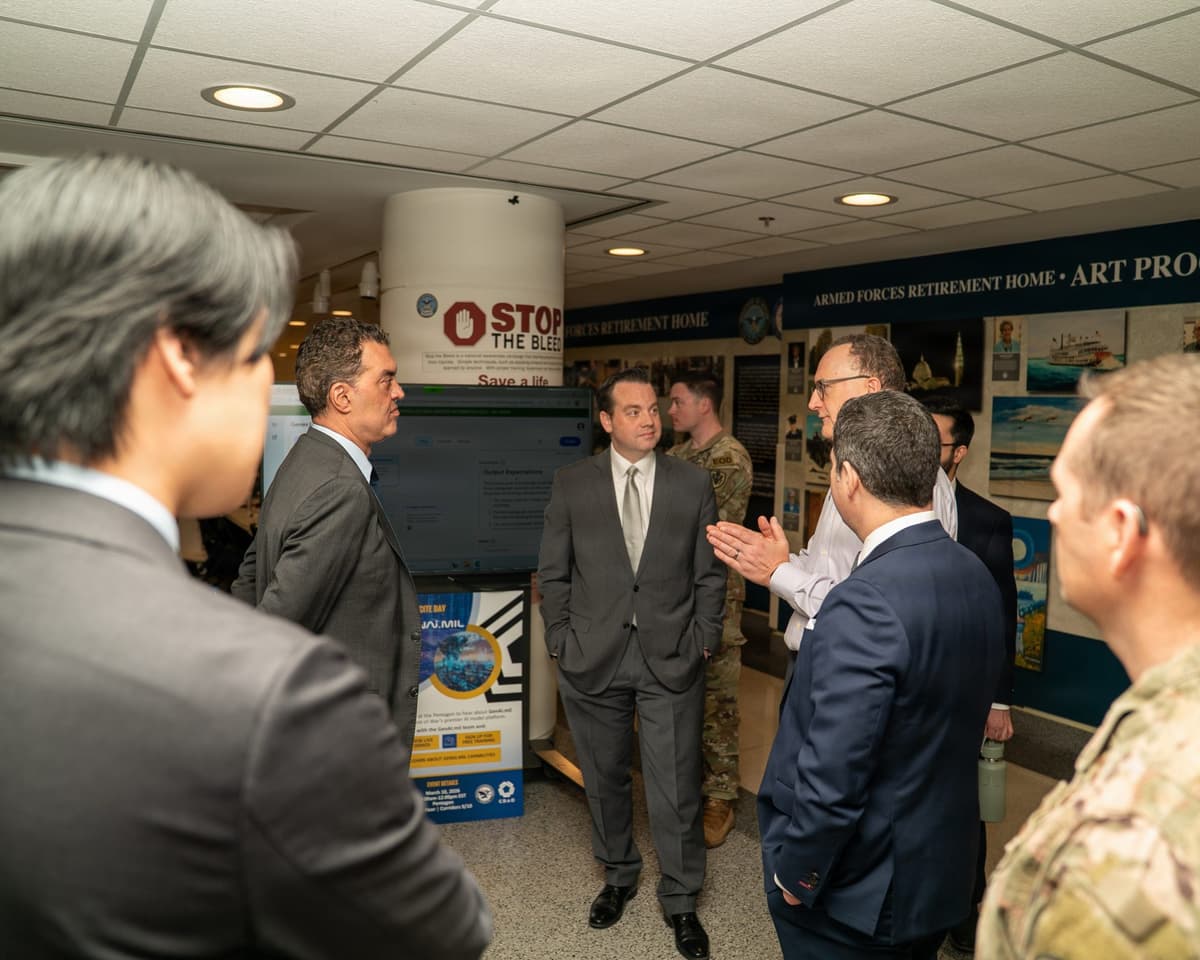

The Pentagon Just Signed Classified AI Deals with 8 Companies. Anthropic Wasn't One of Them.

The DoD inked IL6/IL7 classified AI contracts with OpenAI, Google, Microsoft, and five others — while keeping Anthropic locked out over a 'supply chain risk' label tied to its refusal of an 'all lawful purposes' clause.

Blacklisted

On May 1, the U.S. Department of Defense announced IL6 and IL7 classified AI contracts with eight companies: OpenAI, Google, Microsoft, AWS, Nvidia, SpaceX, Reflection AI, and Oracle. One major AI lab is conspicuously absent. Anthropic, the company that has arguably done more than any other to champion AI safety, has been formally excluded from the Pentagon's most sensitive AI work. The reason isn't a technical failure or a security breach. It's a contract clause.

The same week this news dropped, reports surfaced that Anthropic is weighing a funding round that would value the company at $900 billion. So we have a company that might become the most valuable AI startup in history, yet it can't get a seat at the table where its own government decides how AI will be used in classified operations. That tension tells you something important about where the AI industry is heading.

What IL6 and IL7 Actually Mean — The Pentagon's Classification Tiers for AI

If you're not steeped in defense procurement, the "IL6/IL7" labels probably mean nothing. They matter a lot here, so let's break them down.

The Defense Information Systems Agency (DISA) assigns Impact Levels (IL) to cloud environments based on the sensitivity of data they're authorized to handle. The scale runs from IL2 (public, non-sensitive information) through IL7. Most commercial cloud services operate at IL2. Controlled Unclassified Information (CUI) sits at IL4 and IL5. A lot of government contractors work at these levels without too much friction.

IL6 is where things get serious. An IL6-authorized environment can process classified data up to the Secret level. Think operational military plans, intelligence assessments, weapons system specifications. The security requirements jump dramatically: air-gapped or heavily segmented networks, cleared personnel, physical facility inspections, and continuous monitoring. Getting IL6 authorization is a multi-year, multi-million-dollar process for most companies.

IL7 goes further. It covers Top Secret and mission-critical national security information. Nuclear operations, strategic intelligence, Special Access Programs (SAPs). At this level, the government doesn't just audit your technology stack — it audits your entire supply chain, your investor base, and the nationality composition of your workforce. It's the highest commercially accessible classification tier, and very few companies have ever operated at this level.
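As a rough aid, the tiers described above can be written down as a small lookup. This is a sketch that paraphrases this article's summary, not an official DISA definition. The names `ImpactLevel`, `DATA_HANDLED`, `is_classified`, and `can_host` are hypothetical, and the rule that an environment can host workloads at or below its own level is a simplifying assumption made for illustration.

```python
from enum import IntEnum


class ImpactLevel(IntEnum):
    """Impact Levels as summarized in this article (not an official table)."""
    IL2 = 2
    IL4 = 4
    IL5 = 5
    IL6 = 6
    IL7 = 7


# Descriptions paraphrase the article's text; they are not DISA wording.
DATA_HANDLED = {
    ImpactLevel.IL2: "public, non-sensitive information",
    ImpactLevel.IL4: "Controlled Unclassified Information (CUI)",
    ImpactLevel.IL5: "Controlled Unclassified Information (CUI)",
    ImpactLevel.IL6: "classified data up to the Secret level",
    ImpactLevel.IL7: "Top Secret and mission-critical national security information",
}


def is_classified(level: ImpactLevel) -> bool:
    """True for the tiers the article describes as handling classified data."""
    return level >= ImpactLevel.IL6


def can_host(environment: ImpactLevel, workload: ImpactLevel) -> bool:
    """Assumption: an environment may host workloads at or below its own level."""
    return environment >= workload


if __name__ == "__main__":
    for level in ImpactLevel:
        tag = "classified" if is_classified(level) else "unclassified"
        print(f"{level.name}: {DATA_HANDLED[level]} ({tag})")
```

Under that assumption, `can_host(ImpactLevel.IL6, ImpactLevel.IL4)` is true while `can_host(ImpactLevel.IL5, ImpactLevel.IL6)` is false, which mirrors the jump in requirements between the CUI tiers and the classified ones.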

What the DoD did on May 1 is essentially declare that large language models are now cleared to operate inside these classified environments. AI will be doing real-time intelligence analysis, threat detection, operational planning support, and document synthesis on networks that handle some of America's most sensitive secrets. That's a paradigm shift, and the companies on the contract list are the ones who will build that future.

The Eight Companies — Who Got In and Why

The roster of eight tells a story about what the Pentagon values in an AI partner.

| Company | Primary Role | Defense Track Record |
|---|---|---|
| Microsoft | Azure Government Secret/Top Secret cloud + AI integration | JWCC contract holder, existing IL6 authorization |
| Google | Vertex AI classified deployment, Gemini models | Returned to defense work post-Project Maven |
| AWS | GovCloud IL6 infrastructure + Bedrock AI services | Operates CIA's C2E cloud contract |
| OpenAI | GPT-series model deployment on classified networks | First classified contract since 2024 policy reversal |
| Nvidia | AI training/inference GPU hardware + software stack | De facto standard for DoD AI infrastructure |
| SpaceX | Starshield satellite comms + edge AI deployment | Existing NSSL launch contract holder |
| Oracle | OCI Government cloud + database services | Long-running DoD ERP systems operator |
| Reflection AI | Next-generation AI models (in development) | New entrant, no commercial model shipped |

Microsoft, AWS, and Oracle are expected names: all three already run significant portions of the government's IT infrastructure. Google's inclusion is notable mainly for what it represents: the full rehabilitation of a company that walked away from military AI in 2018 after the Project Maven employee revolt. That chapter is officially closed.

OpenAI's presence is the most striking evolution. As recently as January 2024, OpenAI's usage policy explicitly prohibited military applications. The company removed that restriction, accepted the "all lawful purposes" language the Pentagon requires, and two years later it's sitting at the classified AI table. Sam Altman's pragmatic turn has paid off in the most concrete way possible.

SpaceX makes sense from an edge-deployment perspective. If you want to run AI models on a forward-deployed military network, you need satellite connectivity that doesn't depend on terrestrial infrastructure. Starshield provides that. Nvidia is simply irreplaceable on the hardware side — every model these companies deploy will almost certainly run on Nvidia silicon.

Then there's Reflection AI. We'll come back to that one.

Why Anthropic Got Frozen Out — The Full Story Behind 'Supply Chain Risk'

The chain of events that led to Anthropic's exclusion is worth tracing carefully, because it reveals a fundamental clash between AI safety principles and national security procurement logic.

In 2025, the DoD began requiring AI service providers to sign contracts containing an "all lawful purposes" clause. This language gives the Pentagon broad discretion to use the AI for any legally authorized purpose — including lethal autonomous systems support, targeting assistance, and integration with weapons platforms. The clause doesn't mandate those uses, but it doesn't exclude them either. It's a blank check on usage rights.

Anthropic refused to sign. The company's Acceptable Use Policy prohibits using its AI to "cause serious harm to people" and places explicit restrictions on autonomous weapons applications. Anthropic's position was that signing the clause would effectively nullify its safety policy — the very thing that differentiates it from competitors.

In early 2026, the DoD formally classified Anthropic as a "supply chain risk." The label didn't stem from a technical vulnerability assessment or a counterintelligence concern. It was a procurement decision: a company that won't guarantee unconditional cooperation on usage terms is, from the Pentagon's perspective, an unreliable supplier. If Anthropic might refuse to support a specific use case mid-contract, that creates operational risk.

Anthropic responded by filing a federal lawsuit against the Department of Defense. The complaint makes two central arguments. First, that the "supply chain risk" classification is arbitrary and not grounded in factual risk assessment. Second, that it effectively constitutes a permanent ban from defense AI contracts, depriving Anthropic of property rights and market access without due process.

The lawsuit is active. The DoD signed the eight-company contracts anyway.

Here's a timeline:

| Date | Event |
|---|---|
| January 2024 | OpenAI removes military use prohibition from its policy |
| H1 2025 | DoD requires "all lawful purposes" clause from AI vendors |
| H2 2025 | Anthropic refuses to sign the clause |
| Early 2026 | DoD classifies Anthropic as "supply chain risk" |
| March 2026 | Anthropic files federal lawsuit against DoD |
| April 19, 2026 | Axios reports NSA is already running Anthropic's Mythos Preview |
| May 1, 2026 | DoD signs IL6/IL7 contracts with 8 companies, Anthropic excluded |

The irony is hard to miss. The company that has invested more in AI safety research than any of its competitors — Constitutional AI, Responsible Scaling Policy, interpretability research — is the one labeled a national security risk. Not because its technology is unsafe, but because it insists on limiting how its technology can be used.

The Mythos Paradox — NSA Uses It, DoD Won't Touch It

This is where the story gets genuinely strange. On April 19, Axios reported that the NSA is already running Mythos Preview, Anthropic's specialized cyber defense model. Mythos is designed for network intrusion detection, threat analysis, and vulnerability assessment. By most accounts, it's best-in-class for these applications.

So within the same government, the NSA — an agency that operates at the highest possible classification levels — is actively using an Anthropic model, while the DoD has labeled Anthropic a supply chain risk and locked it out of classified AI contracts.

Pentagon CTO Emil Michael addressed this contradiction in a May 1 CNBC interview. His answer: "The blacklist holds, but Mythos is a separate issue." Translation: the DoD won't accept Anthropic as a full partner, but it's willing to make exceptions when a specific Anthropic tool is too good to ignore.

This creates several interesting dynamics. First, it suggests internal disagreement within the defense establishment. Cyber defense operators who rely on Mythos probably aren't thrilled that institutional politics are threatening their access to a critical tool. Second, it gives Anthropic powerful ammunition in its lawsuit. If the NSA trusts Anthropic's technology on its most sensitive networks, the "supply chain risk" label starts to look less like a genuine security assessment and more like a punishment for non-compliance with contract terms.

Third, it exposes a philosophical incoherence in the government's position. You can't simultaneously argue that a company poses a supply chain risk to national security while deploying that company's model inside your signals intelligence agency. Well, you can argue it — Emil Michael just did — but it's not a position that holds up well under judicial scrutiny.

Reflection AI's Surprise Inclusion — A Contract Before a Model

The most puzzling name on the eight-company list is Reflection AI. Founded in 2025, the company is reportedly in negotiations for a $25 billion valuation. It has not shipped a commercial model. There are no public benchmarks, no customer case studies, no third-party evaluations of its technology.

Yet it secured an IL6/IL7 classified AI contract alongside Microsoft, Google, and OpenAI.

The most charitable interpretation is that the Pentagon is placing a strategic bet. Reflection AI's founding team presumably includes people with strong defense or intelligence backgrounds, and the DoD wants to lock in access to whatever they build before the commercial market prices it beyond reach. Government procurement often works this way — you contract early with promising technology providers to ensure priority access.

But the contrast with Anthropic is brutal. Claude is one of the most capable and widely deployed frontier models in the world. Anthropic has millions of users, enterprise customers, and a published safety research track record. It was excluded. A company with no model, no customers, and no public track record was included. The message is clear: the Pentagon's selection criteria aren't about technical capability. They're about willingness to accept the government's terms without conditions.

Stakes — Winners, Losers, and the Watch List

Winners. OpenAI comes out ahead more than anyone else. The company's 2024 policy reversal — dropping the military use prohibition — was widely criticized at the time. Two years later, it has translated directly into classified AI contracts that most companies can only dream of. Google's defense rehabilitation is complete. Nvidia's position as the infrastructure backbone of government AI gets even more entrenched.

SpaceX opens up a new vector: space-based AI deployment for military applications. The fact that Elon Musk entered this contract through SpaceX rather than xAI is tactically interesting — it suggests a deliberate separation between infrastructure and model businesses.

Losers. Anthropic's immediate revenue impact is probably manageable. The bigger problem is the signal. Being frozen out of U.S. classified AI contracts tells the market that this company doesn't have the trust of its own government. That's uncomfortable when you're trying to raise at a $900 billion valuation. Investors will inevitably ask: if the Pentagon doesn't trust Anthropic, should we?

The counter-argument exists: Anthropic's consumer and enterprise business is entirely separate from defense, and its principled stance on AI safety could be a long-term brand asset. But in the short term, this hurts.

Watch list. Reflection AI now has to deliver. Getting a classified AI contract without a model is a remarkable achievement, but it's also a clock that starts ticking. If Reflection can't produce an IL6/IL7-capable model within a reasonable timeframe, the DoD's judgment will be questioned.

Anthropic's lawsuit is the other variable. If a federal court rules that the "supply chain risk" classification was arbitrary, the DoD may be forced to revisit the entire contract structure. That outcome isn't likely in the near term, but it's not impossible either.

The Skeptics — "The Price of Principle" vs. "The Decision You Brag About in 30 Years"

Opinion on Anthropic's stance splits cleanly into two camps.

Defense hawks and procurement veterans see it as naive idealism with real consequences. The logic is straightforward: if you want government contracts, you play by government rules. Your internal AI safety policy doesn't override national security requirements. Companies that understood this — OpenAI, Google, Microsoft — are now building the classified AI infrastructure of the United States. Anthropic chose principle over pragmatism, and it's paying the price.

AI safety researchers and civil liberties advocates see it differently. The argument goes something like this: deploying AI for "all lawful purposes" in a military context means potentially enabling autonomous targeting, surveillance at scale, and weapons systems integration. A company that builds frontier AI models has a responsibility to set boundaries on their use, even when — especially when — the customer is the most powerful military on Earth. If Anthropic folds on this, the concept of responsible AI development becomes meaningless.

Both perspectives have merit. The Pentagon's position that it can't rely on an AI provider who might refuse to support a lawful mission is operationally rational. Anthropic's position that blanket usage authorization undermines the entire framework of AI safety governance is philosophically coherent. This isn't a case where one side is clearly wrong. It's a structural tension that the AI industry hasn't resolved, and maybe can't resolve within existing frameworks.

The question for the next decade is whether Anthropic's stance becomes a competitive disadvantage that slowly erodes its position, or a founding principle that eventually reshapes how governments procure AI. History tends to be kind to companies that held lines. But history is written by survivors, and surviving as a $900 billion company without government trust is a harder path than it looks.

What to Do Monday Morning

If you're a developer or engineer: The defense AI market just got a lot more concrete. If you're interested in this space, start by understanding FedRAMP High authorization, DISA STIGs, and the architecture differences between commercial and government cloud environments (Azure Government, AWS GovCloud). Experience building in these environments is a genuine differentiator and one that's in short supply.

If you're running projects that depend on Anthropic's Claude API and your work touches government or defense, build contingency plans now. Commercial API access isn't affected today, but regulatory environments can shift.
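One way to sketch such a contingency plan is to route model calls through a thin wrapper that tries providers in a fixed order. The example below is a minimal illustration under stated assumptions, not a production pattern: `complete_with_fallback`, `AllProvidersFailed`, and the two stub providers are hypothetical names, and no real vendor SDK is called. In practice each stub would be replaced by a function that wraps whichever client library a project already uses.

```python
from typing import Callable, List, Sequence, Tuple

# Hypothetical provider signature: takes a prompt, returns generated text.
Provider = Callable[[str], str]


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider raised an exception."""


def complete_with_fallback(
    prompt: str, providers: Sequence[Tuple[str, Provider]]
) -> Tuple[str, str]:
    """Try providers in order; return (provider_name, text) from the first success."""
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # collect the failure and move to the next provider
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stubs standing in for real clients (assumptions for illustration only).
def primary_stub(prompt: str) -> str:
    raise ConnectionError("primary unavailable")


def secondary_stub(prompt: str) -> str:
    return f"echo: {prompt}"


if __name__ == "__main__":
    used, text = complete_with_fallback(
        "status?", [("primary", primary_stub), ("secondary", secondary_stub)]
    )
    print(used, text)
```

Keeping the provider list in configuration rather than in code means a policy or contract change only requires reordering or removing an entry.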

If you're a startup founder: This contract round confirmed that accepting the "all lawful purposes" clause is effectively a prerequisite for defense AI work. If government contracts are part of your business plan, factor this into your acceptable use policy from day one. Retrofitting later is costly — just ask Anthropic.

There's also an opportunity angle. The DoD clearly wants a diverse set of AI suppliers. If you can navigate the IL6 authorization process and build defense-relevant capabilities, the market is open and actively looking for new entrants. Reflection AI's inclusion proves that you don't even need a shipping product to get a foot in the door, though you will need to deliver eventually.

If you're an investor: Quantify what defense market exclusion means for Anthropic's total addressable market. U.S. defense AI spending is estimated at roughly $15 billion annually in 2026. That's a meaningful revenue stream with high margins and long contract durations. Whether Anthropic walked away from it or was shut out of it, the loss is a material consideration at a $900 billion valuation.

On the flip side, monitor the lawsuit. A favorable ruling could reopen the entire defense AI market to Anthropic and potentially set precedent about how governments can condition AI procurement on usage terms. Price both scenarios.
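Pricing both scenarios can start as back-of-the-envelope arithmetic. The sketch below uses only two figures from this article (the roughly $15 billion annual spending estimate and the $900 billion valuation); the market share and the probability of a favorable ruling are placeholder assumptions, not estimates, and the function names are hypothetical.

```python
DEFENSE_AI_SPEND_USD = 15e9  # article's estimate of 2026 U.S. defense AI spending
VALUATION_USD = 900e9        # valuation figure reported in the article


def defense_revenue(share: float) -> float:
    """Annual revenue if a vendor captured `share` of the estimated spending."""
    if not 0.0 <= share <= 1.0:
        raise ValueError("share must be between 0 and 1")
    return DEFENSE_AI_SPEND_USD * share


def expected_defense_revenue(p_favorable: float, share_if_favorable: float) -> float:
    """Probability-weighted revenue; the exclusion scenario contributes zero."""
    if not 0.0 <= p_favorable <= 1.0:
        raise ValueError("p_favorable must be between 0 and 1")
    return p_favorable * defense_revenue(share_if_favorable)


if __name__ == "__main__":
    share = 0.10       # placeholder assumption, not an estimate
    p_favorable = 0.25  # placeholder assumption, not an estimate
    print(f"Revenue at {share:.0%} share: ${defense_revenue(share) / 1e9:.2f}B")
    print(
        "Probability-weighted revenue: "
        f"${expected_defense_revenue(p_favorable, share) / 1e9:.3f}B"
    )
    print(f"Total market vs. valuation: {DEFENSE_AI_SPEND_USD / VALUATION_USD:.1%}")
```

With those placeholder inputs, a 10% share works out to about $1.5 billion a year. The point of the exercise is the sensitivity to the inputs, not the specific numbers.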

Sources

| Publication | Title | Link |
|---|---|---|
| DefenseScoop | DoD Expands Classified AI Work with 8 Companies, Excluding Anthropic | defensescoop.com |
| CNBC | Pentagon Anthropic Blacklist: Mythos Is "Separate Issue," Says Emil Michael | cnbc.com |
| Breaking Defense | Pentagon Clears 7 Tech Firms to Deploy Their AI on Its Classified Networks | breakingdefense.com |
| Federal News Network | DoD Strikes Deals with Major Tech Firms to Deploy AI on Classified Networks | federalnewsnetwork.com |
| Defense News | Pentagon Freezes Out Anthropic as It Signs Deals with AI Rivals | defensenews.com |
