Revolut Trained an AI on 40 Billion Banking Events. Here's What It Learned.

Revolut published PRAGMA, a foundation model trained on 40 billion financial events from 25 million users. It lifts fraud-detection recall by 64.7% over the production baseline and serves credit scoring and lifetime-value (LTV) prediction from a single pre-trained base.

40 Billion Events, One Language

40 billion events. That's what Revolut fed into PRAGMA, its in-house foundation model. Converted to tokens, that's roughly 207 billion — the same order of magnitude as GPT-3's original training corpus.

Every transfer, payment, currency exchange, investment, and subscription from 25 million users across 111 countries, over several years, treated as a single massive corpus. The way GPT reads internet text, PRAGMA reads the flow of money.
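A quick back-of-envelope check puts those headline numbers in proportion. The sketch below uses only the three figures quoted above; the per-event and per-user averages are derived here and are not stated in the paper.

```python
# Figures quoted in the article.
events = 40e9    # banking events in the corpus
tokens = 207e9   # tokens after conversion
users = 25e6     # users covered

tokens_per_event = tokens / events   # roughly 5.2 tokens per event
events_per_user = events / users     # 1,600 events per user on average

print(f"{tokens_per_event:.2f} tokens/event, {events_per_user:,.0f} events/user")
```

In other words, each event is a very short "sentence", and the average user history runs to well over a thousand of them.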

The paper dropped on arXiv on April 9, and it matters for one reason: this is the first publicly documented case of a bank building its own foundation model and deploying it in production. Reported gains over production baselines include +130.2% in credit-scoring PR-AUC and +64.7% in fraud-detection recall.

Why This Paper Lands Now

Banking AI has lived inside a "rules engine + gradient boosting" cage for years. One bespoke model per task, one bespoke feature set per task, hand-engineered by a separate team. Inside Revolut, fraud detection, credit scoring, and churn prediction all ran on completely separate pipelines.

The problem is that this approach stopped scaling. Revolut's user base has grown into the tens of millions, and the product surface has expanded from card payments into crypto, stocks, and insurance. More tasks mean more features and more bespoke engineering, and eventually the team breaks.

NLP already solved this problem. Pre-train one model well, then serve dozens of downstream tasks with the same embeddings. PRAGMA is the first public attempt to port that playbook into banking event data at production scale.

Source: commons.wikimedia.org · CC-BY-SA 4.0


Method Breakdown

Approach — Treating Each Transaction as a Sentence

PRAGMA's core idea is Key-Value-Time (KVT) tokenisation. The way a text LLM breaks words into tokens, PRAGMA decomposes each transaction into three components: what it is (Key), how much (Value), and when (Time).

Take "Starbucks card payment $6.50 on April 10 at 15:23." The Key is one of roughly 60 tokens representing the field's semantic type. The Value is encoded via percentile buckets for numerics, or BPE subwords (~28k vocab) for text. The Time is log-seconds since the previous event plus cyclical features (hour, day-of-week, day-of-month).
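To make the Key and Value parts concrete, here is a minimal sketch of percentile bucketing for a numeric field. The key names, the five-bucket setting, and the helper functions are all hypothetical and chosen for illustration; the article only says there are roughly 60 key tokens and that numerics go into percentile buckets. Text values, which the article says use BPE subwords, are not covered.

```python
from bisect import bisect_right

# Hypothetical key vocabulary; the real model is said to have roughly 60 keys.
KEY_VOCAB = {"merchant_name": 0, "amount": 1, "currency": 2, "channel": 3}

def percentile_edges(samples, n_buckets):
    """Bucket boundaries taken from the empirical distribution of `samples`."""
    ordered = sorted(samples)
    return [ordered[(i * len(ordered)) // n_buckets] for i in range(1, n_buckets)]

def bucket_of(value, edges):
    """Index of the percentile bucket that `value` falls into."""
    return bisect_right(edges, value)

def tokenise_field(key, value, edges):
    """Return a (key_token, value_token) pair for one numeric field."""
    return KEY_VOCAB[key], bucket_of(value, edges)

# Toy sample of past amounts used to fit the bucket edges (made-up numbers).
past_amounts = [1.2, 3.5, 4.0, 6.5, 8.0, 12.0, 25.0, 40.0, 90.0, 300.0]
edges = percentile_edges(past_amounts, n_buckets=5)
key_token, value_token = tokenise_field("amount", 6.50, edges)
```

Bucketing by percentile rather than by fixed width means a $6.50 coffee and a $6,500 transfer land in different tokens even though raw amounts span several orders of magnitude.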

This lets PRAGMA learn both "this user pays on Tuesday mornings" and "this user pays on weekends" from the same sequence. Time features that older GBDT pipelines had to hand-engineer now fall out of the architecture.
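The Time component can be sketched in a few lines. This is an illustration under assumptions, not the paper's code: the sine/cosine encoding, the use of `log1p`, the period of 31 for day-of-month, and the year in the example timestamps are all choices made here.

```python
import math
from datetime import datetime

def cyclical(value, period):
    """Map a periodic quantity onto the unit circle so that the ends wrap around."""
    angle = 2 * math.pi * value / period
    return math.sin(angle), math.cos(angle)

def time_features(prev, curr):
    """Log-seconds since the previous event plus cyclical calendar features."""
    delta = max((curr - prev).total_seconds(), 0.0)
    return {
        "log_dt": math.log1p(delta),  # log1p is an assumption; it keeps delta = 0 finite
        "hour": cyclical(curr.hour, 24),
        "day_of_week": cyclical(curr.weekday(), 7),
        "day_of_month": cyclical(curr.day - 1, 31),  # period 31 is an assumption
    }

# The year is an assumption; the article's example only gives "April 10 at 15:23".
prev = datetime(2026, 4, 10, 9, 5)
curr = datetime(2026, 4, 10, 15, 23)
features = time_features(prev, curr)
```

The circular encoding is what lets 23:00 and 01:00 sit close together, which a raw hour number would not.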

Core Technique — Three-Stream Encoder with Masked Modelling

The model has three encoder branches. A profile-state encoder processes static user attributes (country, join date, premium tier) with RoPE positional encoding. An event encoder embeds each transaction independently. A history encoder contextualises their concatenated output.
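The data flow between the three branches can be sketched as follows. This is only a shape-level illustration: mean pooling stands in for the real transformer blocks, RoPE is omitted, and every function name here is hypothetical.

```python
def mean_pool(vectors):
    """Average a list of equal-length vectors; a stand-in for a transformer block."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]

def profile_encoder(profile_tokens):
    # Stand-in: pools embeddings of static attributes (country, join date, tier).
    return mean_pool(profile_tokens)

def event_encoder(event_tokens):
    # Each event is embedded on its own, without seeing any other event.
    return mean_pool(event_tokens)

def history_encoder(profile_vec, event_vecs):
    # Contextualises the concatenated profile and event vectors.
    sequence = [profile_vec] + event_vecs
    return mean_pool(sequence)

# Toy 2-dimensional token embeddings (made-up numbers).
profile = [[1.0, 0.0], [0.0, 1.0]]
events = [
    [[2.0, 2.0], [4.0, 0.0]],  # first event: two tokens
    [[0.0, 6.0]],              # second event: one token
]
event_vecs = [event_encoder(e) for e in events]
user_embedding = history_encoder(profile_encoder(profile), event_vecs)
```

The point of the split is that event encoding is independent per transaction, so only the history encoder has to look across the whole sequence.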

Pre-training uses masked language modelling at three granularities simultaneously: token-level (15%), event-level (10%), and semantic-type-level (10%). Forcing the model to solve "predict the amount of Thursday's Starbucks charge" and "predict what happened on Wednesday afternoon" in parallel prevents overfitting to any single pattern.
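A rough sketch of masking at the three granularities is shown below. The rates come from the article, but how the three levels are combined, and the choice to hide only values while leaving keys visible, are assumptions made here for illustration.

```python
import random

MASK = "[MASK]"

def mask_history(events, rng, p_token=0.15, p_event=0.10, p_type=0.10):
    """events: list of events, each a list of (key, value) pairs.

    Returns a masked copy plus the (event_idx, field_idx) positions to predict.
    """
    keys = sorted({k for ev in events for k, _ in ev})
    # Semantic-type level: hide every value of the chosen field types.
    masked_types = {k for k in keys if rng.random() < p_type}
    masked, targets = [], []
    for i, ev in enumerate(events):
        # Event level: hide all values of this event at once.
        whole_event = rng.random() < p_event
        new_ev = []
        for j, (k, v) in enumerate(ev):
            # Token level: hide individual values independently.
            hide = whole_event or k in masked_types or rng.random() < p_token
            if hide:
                targets.append((i, j))
            new_ev.append((k, MASK if hide else v))
        masked.append(new_ev)
    return masked, targets

history = [
    [("merchant", "Starbucks"), ("amount", "bucket_3")],
    [("merchant", "Spotify"), ("amount", "bucket_2")],
]
masked, targets = mask_history(history, random.Random(0))
```

Token-level masking produces the "predict the amount" style of question, while event-level masking produces "predict what happened in this gap".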

| Model Size | Parameters | Training GPUs | Use Case |
|---|---|---|---|
| PRAGMA-S | 10M | not stated | Real-time fraud detection (ultra-low latency) |
| PRAGMA-M | 100M | 16× H100 | Credit scoring, cross-sell prediction |
| PRAGMA-L | 1B | 32× H100 | Precision analysis (latency-tolerant tasks) |

All three sizes are pre-trained the same way and then fine-tuned per task. It's the "one base model, many applications" strategy that works so well in NLP, transplanted into finance.

Results — Six Tasks, Baseline Beaten Everywhere

The paper benchmarks PRAGMA against production baselines across six real Revolut tasks. A simple linear probe on top of PRAGMA embeddings wins every one.

| Task | Metric | Lift vs. Baseline |
|---|---|---|
| Credit Scoring | PR-AUC | +130.2% |
| Communication Engagement | PR-AUC | +79.4% |
| External Fraud | Recall | +64.7% |
| External Fraud | Precision | +16.7% |
| Product Recommendation | mAP | +40.5% |
| Recurrent Transactions | F1 | +5.8% |
| Lifetime Value | PR-AUC | +1.8% |

The 130.2% credit-scoring improvement stands out. Traditional credit scoring leans on structured signals — credit scores, income, debt ratios. PRAGMA adds behavioural data: how someone actually spends money, savings rhythms, subscription management. The embedding captures what a rulebook can't.
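It helps to translate these percentages into multiples. The sketch below assumes the lifts are relative to the baseline, which is the only reading that works for +130.2% on a metric capped at 1.0; the baseline PR-AUC of 0.10 is a made-up number used purely to show the arithmetic.

```python
def relative_lift(baseline, candidate):
    """Relative change of `candidate` over `baseline`, in percent."""
    return (candidate - baseline) / baseline * 100.0

def multiplier(lift_percent):
    """Convert a percentage lift into a multiple of the baseline."""
    return 1.0 + lift_percent / 100.0

# Hypothetical baseline PR-AUC, chosen only to illustrate the arithmetic.
baseline_pr_auc = 0.10
implied_pr_auc = baseline_pr_auc * multiplier(130.2)

print(f"x{multiplier(130.2):.3f} of baseline, implied PR-AUC {implied_pr_auc:.4f}")
```

So a +130.2% lift means the metric is a little over 2.3 times the baseline, whatever the baseline actually was.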

Fraud detection's 64.7% recall jump matters too. Rule-based systems collapse the moment a fraudster learns the rules. PRAGMA asks "does this transaction fit this person's normal pattern?" rather than checking a static threshold. Fewer false positives, more real fraud caught.

The key insight: every one of these tasks runs on embeddings from a single pre-trained model. No separate model per task. Stack a simple linear probe on PRAGMA and you get strong performance out of the box.
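For readers who have not used one, a linear probe is just logistic regression trained on frozen embeddings. The sketch below shows the idea on synthetic two-dimensional "embeddings"; the data, the hyperparameters, and the function names are all illustrative assumptions, and nothing here reproduces Revolut's setup.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_linear_probe(embeddings, labels, lr=0.5, epochs=200):
    """Fit logistic regression on frozen embeddings with plain gradient descent."""
    dim = len(embeddings[0])
    w, b = [0.0] * dim, 0.0
    n = len(embeddings)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in zip(embeddings, labels):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            for d in range(dim):
                grad_w[d] += err * x[d]
            grad_b += err
        # Only the probe's weights move; the embeddings are never updated.
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b

def predict(w, b, x):
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

# Synthetic stand-in for frozen user embeddings: two well-separated clusters.
rng = random.Random(0)
X = [[rng.gauss(-2, 0.5), rng.gauss(-2, 0.5)] for _ in range(50)]
X += [[rng.gauss(2, 0.5), rng.gauss(2, 0.5)] for _ in range(50)]
y = [0] * 50 + [1] * 50
w, b = train_linear_probe(X, y)
accuracy = sum((predict(w, b, x) > 0.5) == bool(t) for x, t in zip(X, y)) / len(y)
```

If a classifier this simple performs well, the credit goes to the embeddings, which is exactly the argument the paper is making.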


Limitations — 47.1% Drop on AML

The paper is refreshingly candid about its weaknesses. The biggest one: a 47.1% performance drop on anti-money-laundering (AML) detection versus the baseline.

The authors spell out why. "AML detection is inherently relational: the baseline leverages cross-record features that capture network-level signals. Because PRAGMA processes event histories in isolation, the resulting embeddings do not inherently capture the cross-record dependency structures crucial for this task." Looking at individual user sequences simply isn't enough when the laundering signal lives in the graph between accounts.
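A toy example shows what a cross-record feature looks like and why a per-user sequence cannot see it. The feature below (how many distinct senders pay into an account's busiest destination) is a hypothetical illustration, not one of the baseline's actual features, and the transfers are made up.

```python
from collections import defaultdict

def busiest_destination_fan_in(transfers):
    """transfers: iterable of (sender, receiver, amount).

    For each sender, return the largest number of distinct senders
    that pay into any one of its destination accounts.
    """
    senders_of = defaultdict(set)    # receiver -> distinct senders
    receivers_of = defaultdict(set)  # sender -> distinct receivers
    for sender, receiver, _amount in transfers:
        senders_of[receiver].add(sender)
        receivers_of[sender].add(receiver)
    return {
        account: max(len(senders_of[r]) for r in receivers)
        for account, receivers in receivers_of.items()
    }

# Made-up pattern: three accounts funnel money into M, which forwards it to X.
transfers = [
    ("A", "M", 900.0),
    ("B", "M", 950.0),
    ("C", "M", 980.0),
    ("M", "X", 2800.0),
]
features = busiest_destination_fan_in(transfers)
```

From A's own history there is a single transfer to M and nothing more. That M is also collecting money from B and C is only visible once records from several accounts are joined.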

Reproducibility is another caveat. Revolut's 25-million-user transaction log can't be released for privacy reasons. The architecture and techniques are public, but maybe ten organisations on the planet can reproduce the result. Read this paper as an industrial reference implementation, not a reproducible academic benchmark.

Field Context — The Lineage of Finance Foundation Models

Previous attempts to bring foundation models to finance existed. BloombergGPT (2023) pre-trained a 50B-parameter LLM on 363B financial tokens. JPMorgan's IndexGPT (2024) took a similar route. Both built on top of text-based LLMs.

PRAGMA starts somewhere else. Financial event sequences are the native input, not an afterthought bolted onto a text model. It's structurally different. BERT4Rec and other sequence-recommendation papers are its closer cousins, but PRAGMA is orders of magnitude larger in both data and parameter count, and covers a much broader task surface.

| Model | Approach | Training Data | Scale |
|---|---|---|---|
| BloombergGPT (2023) | Text LLM + financial docs | Financial news/reports | 50B params, 363B tokens |
| IndexGPT (2024) | Text LLM + financial QA | Investment advisory text | undisclosed |
| BERT4Rec (2019) | Sequence recommendation | User clicks/purchases | hundreds of thousands of params |
| PRAGMA (2026) | Event-sequence model | 40B transaction events | 1B params, 207B tokens |

The distinction matters. BloombergGPT is "an AI that knows about finance." PRAGMA is closer to "an AI that has experienced finance."

200+ H100 GPUs in Real Production

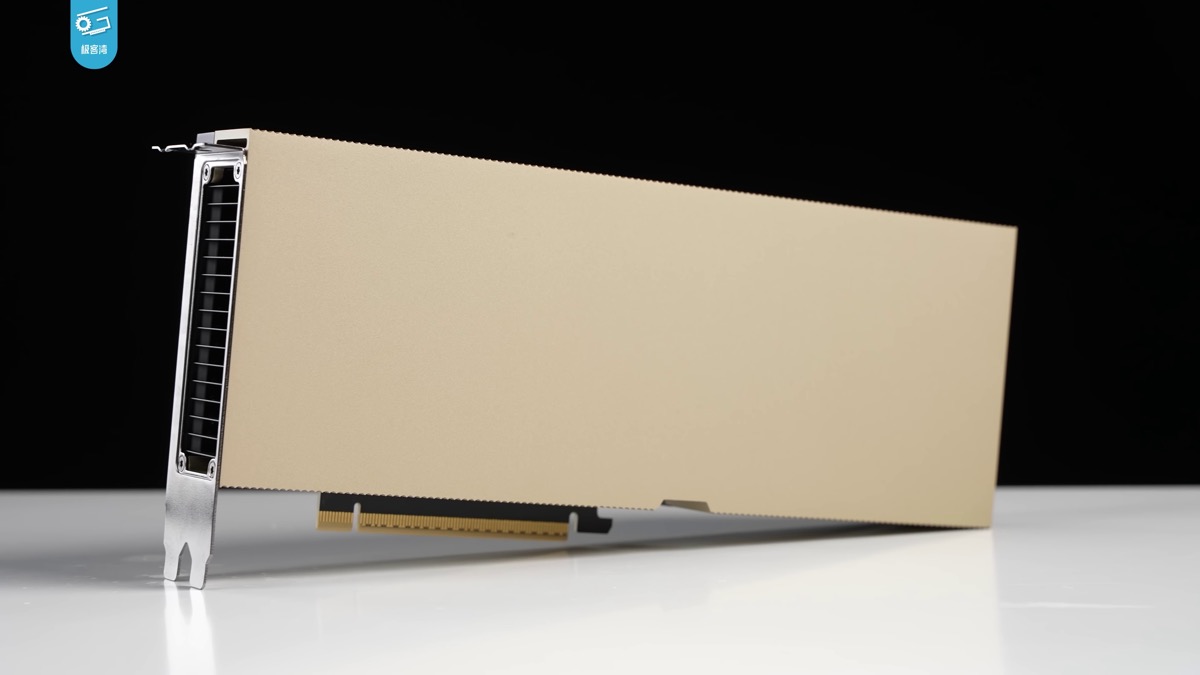

This isn't just a paper. PRAGMA is running in Revolut's production systems right now. The inference stack spans 200+ NVIDIA H100 GPUs and powers AIR (Artificial Intelligence by Revolut), the company's AI assistant currently rolling out to 13 million UK customers.

The infrastructure runs on Nebius, the Amsterdam-based AI cloud company that emerged from the former Yandex N.V. It's a notable choice: a European fintech using European-based AI cloud infrastructure, which matters for GDPR compliance. Moving personal data out of the EU adds significant compliance overhead.

Source: commons.wikimedia.org · Public Domain


What This Means for You

For developers and fintech builders, the PRAGMA paper sends clear signals.

First, domain-specific foundation models have arrived. General-purpose LLMs are powerful, but domains with unique event-sequence data — finance, healthcare EHR, telecom CDR, industrial IoT logs — may be better served by purpose-built models. Any domain with sequence-shaped event data is now a candidate.

Second, data is the moat. Revolut can build this model because it has years of data from 25 million users. No startup, no research lab can replicate that dataset. The real competitive advantage isn't the architecture — it's the corpus. That's why even a fully-published paper is nearly impossible to reproduce outside a handful of institutions.

Third, the approach has holes. AML-style relational tasks remain a structural weakness of per-user sequence models. The next step is almost certainly hybrids: per-user sequence encoders combined with graph neural networks that capture cross-account relationships. PRAGMA is a milestone, not an endpoint.

References

- arXiv: PRAGMA: Revolut Foundation Model (2604.08649)

- arXiv HTML full paper

- Let's Data Science: Revolut Deploys PRAGMA Foundation Model for Finance

- Nebius Customer Story: Revolut on the Inference Frontier

- Fintech Weekly: Revolut Launches AIR AI Assistant to 13 Million UK Customers

- ResearchGate: PRAGMA paper (PDF)
