OpenAI Doubles Down on Cerebras: $20B Deal + Equity Warrant

OpenAI will pay Cerebras $20B+ over three years and can pick up to 10% equity as spend scales. A direct bet on reducing Nvidia dependency — and rewiring inference economics.

$20B. And up to 10% equity.

OpenAI will pay Cerebras at least $20 billion over three years, with total spending potentially reaching $30 billion — and that spend will translate into warrants for up to a 10% equity stake, according to reporting by The Information. A separate $1 billion commitment from OpenAI will help fund Cerebras data center construction. This more than doubles the $10 billion, 750-megawatt deal disclosed in January.

What does it mean?

OpenAI is serious about reducing Nvidia dependency — specifically for inference.

Why this matters — and how we got here

Inference is the real bill

When ChatGPT launched in late 2022, every AI shop obsessed over training. Bigger models, trained faster, and that required Nvidia H100s by the tens of thousands. By 2025, the story had shifted. Models were already huge. The user base had exploded. And suddenly the real cost wasn't training. It was inference.

Inference is what happens every time you ask ChatGPT a question: the server runs the model and generates an answer. Training is largely a one-time, capex-style spike for each model. Inference is a recurring cost that grows roughly linearly with every user, every query, every day. For a service with hundreds of millions of weekly users, inference compute can become the company's largest opex line.
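The difference between the two cost curves can be sketched in a few lines. This is a minimal illustration, not OpenAI's actual economics: the training cost, per-query cost, and query volume below are hypothetical placeholders chosen only to make the arithmetic easy to follow.

```python
import math


def daily_inference_cost(cost_per_1k_queries_usd, queries_per_day):
    """Inference spend per day; it scales linearly with query volume."""
    return cost_per_1k_queries_usd * queries_per_day / 1_000


def days_until_inference_matches_training(
    training_cost_usd, cost_per_1k_queries_usd, queries_per_day
):
    """Days of serving after which cumulative inference spend reaches the
    one-time training spend."""
    daily = daily_inference_cost(cost_per_1k_queries_usd, queries_per_day)
    return math.ceil(training_cost_usd / daily)


# Hypothetical placeholder inputs (not figures from any company).
TRAINING_COST_USD = 100_000_000
COST_PER_1K_QUERIES_USD = 2
QUERIES_PER_DAY = 1_000_000_000

days = days_until_inference_matches_training(
    TRAINING_COST_USD, COST_PER_1K_QUERIES_USD, QUERIES_PER_DAY
)
print(f"Inference spend matches training spend after {days} days")
```

Under these placeholder inputs, doubling the query volume halves the number of days. That is the sense in which inference, not training, ends up dominating the bill for a heavily used service.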

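Nvidia H100s and B200s dominate training. But they are general-purpose parts, and much of what they offer goes unused in inference. Generating tokens one at a time is usually limited by how fast weights can be read from memory, not by raw compute. A purpose-built inference chip, one that trades training flexibility for memory bandwidth and low latency, can be much more efficient. Nvidia's silicon was not designed around that trade-off.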

What Cerebras actually sells

Cerebras is a Silicon Valley AI chip startup founded in 2016. Its signature product is the Wafer-Scale Engine, a single chip cut from an entire 12-inch (300 mm) silicon wafer. Nvidia's H100 die is about 814 mm². The Cerebras WSE-3 is 46,225 mm², roughly 57× larger.

Why build a chip that big? To eliminate the bottleneck of chip-to-chip communication. In LLM inference, a huge amount of time is wasted moving data between GPUs. If all compute happens inside one wafer, that traffic disappears.

| Metric | Nvidia H100 | Cerebras WSE-3 |
|---|---|---|
| Die area | 814 mm² | 46,225 mm² |
| Transistor count | 80B | 4,000B |
| Cores | 16,896 CUDA (528 Tensor) | 900,000+ AI cores |
| On-chip memory | ~50 MB (L2 cache) | 44 GB SRAM |
| Memory bandwidth | 3.35 TB/s (HBM3) | 21 PB/s (on-chip SRAM) |
| Tokens/sec (Llama3-70B inference) | ~30–50 | 1,800+ |

That last row is the point. On Llama3-70B inference, a Cerebras WSE-3 is roughly 36–60× faster per device than an Nvidia H100, going by the figures above. The system costs tens of times more, too. But on tokens per dollar and energy per token for inference workloads, Cerebras wins more often than headlines suggest.
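The ratios behind that claim follow directly from the table. The sketch below takes the table's figures as given and adds two assumptions that are not in the source: a hypothetical cost multiple for the Cerebras system, and a rough memory-bandwidth ceiling on single-stream decoding (about 70 billion parameters, with every weight read once per generated token). It ignores batching, KV-cache traffic, and multi-GPU sharding, so treat the output as an order-of-magnitude check only.

```python
# Figures copied from the comparison table.
H100_TOKENS_PER_SEC_RANGE = (30, 50)   # low and high end of the quoted range
WSE3_TOKENS_PER_SEC = 1_800
H100_BANDWIDTH_BYTES_PER_SEC = 3.35e12  # 3.35 TB/s

# Assumptions for illustration only (not from the source).
LLAMA3_70B_PARAMS = 70e9        # approximate parameter count
HYPOTHETICAL_COST_MULTIPLE = 20  # Cerebras system cost / H100 cost


def speedup_range(baseline_range, candidate):
    """Return (min, max) speedup of candidate over a (low, high) baseline."""
    low, high = baseline_range
    return candidate / high, candidate / low


def wins_on_tokens_per_dollar(speedup, cost_multiple):
    """The faster device wins on tokens per dollar only if its speedup
    exceeds how many times more it costs."""
    return speedup > cost_multiple


def bandwidth_bound_tokens_per_sec(bandwidth_bytes_per_sec, params, bytes_per_param):
    """Ceiling on single-stream decode speed if every weight is read from
    memory once per generated token."""
    return bandwidth_bytes_per_sec / (params * bytes_per_param)


min_speedup, max_speedup = speedup_range(H100_TOKENS_PER_SEC_RANGE, WSE3_TOKENS_PER_SEC)
print(f"Speedup: {min_speedup:.0f}x to {max_speedup:.0f}x")
print("Wins on tokens per dollar:",
      wins_on_tokens_per_dollar(min_speedup, HYPOTHETICAL_COST_MULTIPLE))

for label, bytes_per_param in (("16-bit", 2), ("8-bit", 1)):
    bound = bandwidth_bound_tokens_per_sec(
        H100_BANDWIDTH_BYTES_PER_SEC, LLAMA3_70B_PARAMS, bytes_per_param
    )
    print(f"H100 bandwidth ceiling, {label} weights: {bound:.1f} tokens/sec")
```

With 16-bit weights the ceiling comes out near 24 tokens/sec, and near 48 with 8-bit weights, which is in the same range as the table's ~30–50 for the H100. The break-even rule is the useful part: whatever the real price gap is, Cerebras wins on tokens per dollar only while its speedup stays above that cost multiple.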

The structure of the deal

Three pieces

Break the deal into three parts.

① The $20B+ purchase commitment. OpenAI commits to at least $20B of Cerebras-powered server capacity over three years, scaling toward $30B. This isn't hardware procurement in the traditional sense — it's mostly renting Cerebras-operated data center capacity.

② Equity warrants. As spending grows, OpenAI earns warrants — rights to buy Cerebras equity — up to 10%. This construct is interesting because OpenAI gets to monetize its anchor-customer status, and Cerebras locks in its biggest customer as an equity holder.

③ $1B in data center construction support. A separate commitment of up to $1B from OpenAI to fund Cerebras's buildout — power procurement, land, cooling, the physical stuff.

Why this bundle, now

Consider Nvidia's relationship with OpenAI. Nvidia reportedly committed more than $100B in investment to OpenAI over the last year, and that money ultimately flows back into Nvidia chip purchases. A loop.

For OpenAI, staying inside that loop means no negotiating power. So it's diversifying: Cerebras, Google TPUs (co-designed with Broadcom), AWS Trainium. This Cerebras deal is the largest single diversification move.

PANews framed the moment as a "war of inference": Nvidia and OpenAI each striking $20B-scale deals to cement inference-infrastructure position.

OpenAI isn't leaving Nvidia. It's building a portfolio. Training stays on Nvidia. Inference splits across Cerebras, Broadcom-based ASICs, and Nvidia. Supplier concentration reduced. Standard stuff.

The Cerebras IPO subtext

This deal lines up with Cerebras's IPO plans. Cerebras tried to go public in 2024 but ran into delays when the stake held by G42, its Abu Dhabi-based investor, drew a U.S. national security review. It scrapped that attempt. In April 2026 it refiled, targeting a Q2 listing at a valuation of about $35B and a $3B raise.

The OpenAI deal is the centerpiece. An anchor customer with multi-year, multi-billion revenue commitments is exactly the story IPO investors want. Cerebras's last private mark was $23.1B; $35B would be a ~50% markup.
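The headline numbers can be cross-checked with simple arithmetic. The sketch below uses only figures quoted in this article. The implied stake value is a rough illustration that assumes the full 10% warrant vests at the target IPO valuation and ignores exercise price and dilution, none of which are given here.

```python
# Figures quoted in the article, in billions of USD.
COMMITTED_SPEND_B = 20.0
POTENTIAL_SPEND_B = 30.0
DEAL_YEARS = 3
MAX_WARRANT_STAKE = 0.10
LAST_PRIVATE_VALUATION_B = 23.1
TARGET_IPO_VALUATION_B = 35.0


def annual_run_rate(total_spend_b, years):
    """Average yearly spend implied by a multi-year commitment."""
    return total_spend_b / years


def markup_pct(old_valuation_b, new_valuation_b):
    """Percentage increase from the old valuation to the new one."""
    return (new_valuation_b / old_valuation_b - 1) * 100


def implied_stake_value(stake_fraction, valuation_b):
    """Gross value of a stake at a given valuation (no exercise price,
    no dilution: a simplifying assumption)."""
    return stake_fraction * valuation_b


print(f"Annual spend: ${annual_run_rate(COMMITTED_SPEND_B, DEAL_YEARS):.2f}B "
      f"to ${annual_run_rate(POTENTIAL_SPEND_B, DEAL_YEARS):.2f}B")
print(f"IPO markup over last private mark: "
      f"{markup_pct(LAST_PRIVATE_VALUATION_B, TARGET_IPO_VALUATION_B):.1f}%")
print(f"Gross value of a 10% stake at the IPO target: "
      f"${implied_stake_value(MAX_WARRANT_STAKE, TARGET_IPO_VALUATION_B):.1f}B")
```

On these inputs the commitment averages roughly $6.7B to $10B a year, the markup works out to about 51.5%, and a fully vested 10% stake would be worth about $3.5B gross at the target valuation.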

The wider picture: inference chip market goes multi-player

OpenAI's move toward Cerebras signals that the inference chip market is no longer Nvidia-only. Here's the competitive map as of April 2026:

| Company / Chip | Position | Status |
|---|---|---|
| Nvidia B200/GB200 | Training + inference, all-purpose | Market dominant |
| Cerebras WSE-3 | Wafer-scale inference | OpenAI $20B+, IPO Q2 |
| Google TPU v5p | Internal + Anthropic | Co-designed with Broadcom |
| AWS Trainium 3 | Internal + Anthropic JV | 2026 volume |
| Huawei Ascend 950PR | China inference market | Alibaba, Baidu adoption |
| Groq LPU | Ultra-low-latency inference | Meta, DeepSeek partnerships |
| SambaNova SN40L | Enterprise inference | On-prem strength |
| FuriosaAI RNGD | Korean NPU | Commercial ops started |

Everyone's positioning as "inference specialist." Beating Nvidia in training is still very hard. Beating Nvidia on specific inference workloads is a different, more winnable game.

What this means for you

If you're a developer

No immediate change. OpenAI's API endpoints look the same. Over time, though, expect lower latency and possibly lower per-token pricing as Cerebras infrastructure comes online. Faster-response tiers (GPT-5 Turbo) are the likeliest short-term winners.

If you're running infrastructure

The evaluation bar for "Nvidia alternatives" just moved. When OpenAI validates Cerebras at this scale, it's a signal for enterprise architects to benchmark alternatives on inference-heavy workloads — chatbots, search, code assistants — where Cerebras or Groq may beat H100 on tokens-per-dollar.

If you're an investor

Nvidia's dominance is starting to show cracks — narrow ones, but real. Nvidia still has 80%+ of AI chip share, but within the inference segment, competitors have room to reach 10–20% share. Broadcom, Cerebras, and the Google/Amazon custom-ASIC efforts are the structural beneficiaries.

Further reading

- OpenAI to spend more than $20 billion on Cerebras chips, receive stake — Manila Times

- OpenAI Partners with Cerebras for $20 Billion Deal, Reducing Nvidia Dependency — GuruFocus

- Two $20 billion deals: OpenAI and Nvidia are waging a "war of inference" — PANews

- AI chipmaker Cerebras files to go public after scrapping IPO plans last year — CNBC


관련 기사

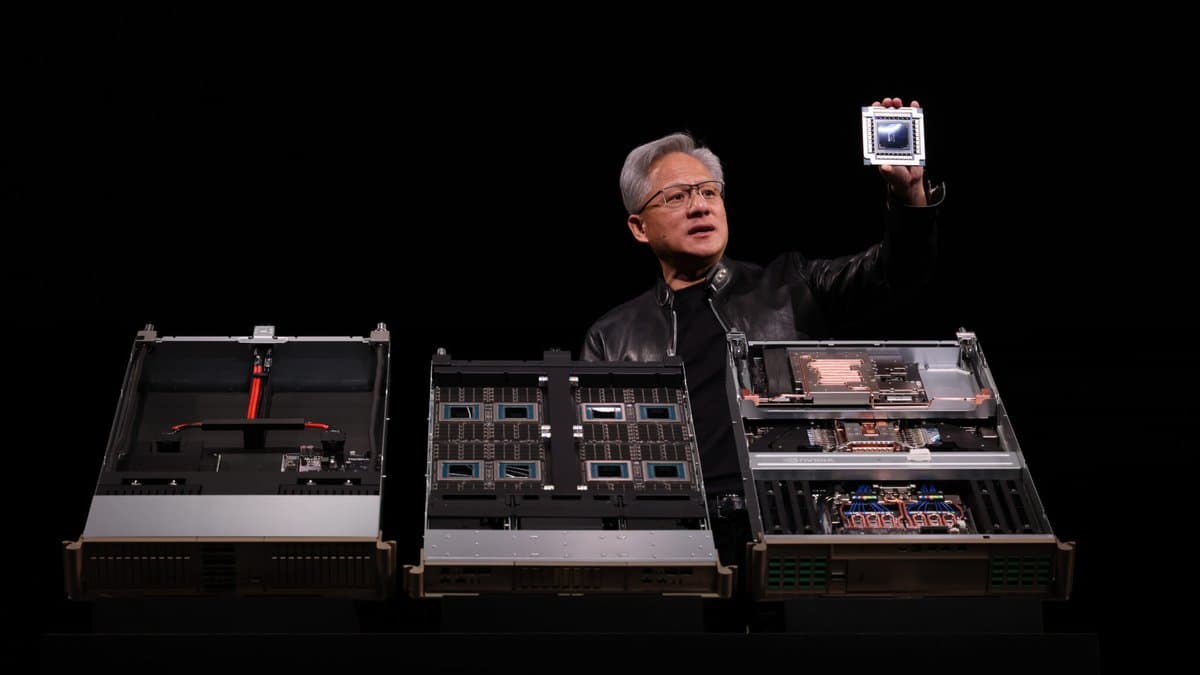

Nvidia GTC 2026: Vera Rubin Unveiled — Complete Technical Breakdown

42.5 ExaFLOPS: Google's Ironwood TPU Rewrites the Inference Playbook

Nvidia Has Already Committed $40B in 2026 — 'Vendor Financing 2.0' Is Buying the Whole AI Stack

AI 트렌드를 앞서가세요

매일 아침, 엄선된 AI 뉴스를 받아보세요. 스팸 없음. 언제든 구독 취소.