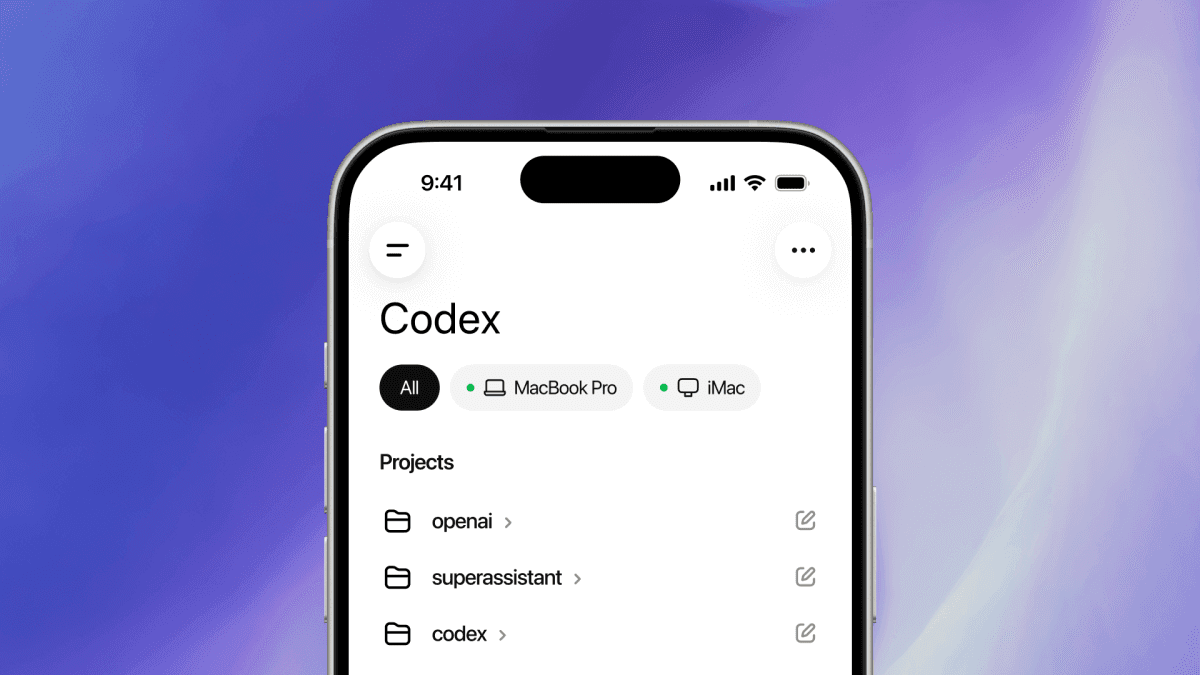

OpenAI Codex Just Got 'Everything Mode' — It Uses Your Computer, Remembers, and Runs for Days

OpenAI rolled Codex into an Everything Mode that unifies computer-use, long-horizon memory, and a multi-tool agentic loop. This is not code generation anymore — it is project-level operations running for days at a time.

Days

Codex Everything Mode can hold a single task for days, OpenAI announced today. In practice that means processing 50 GitHub issues, producing 20 refactor PRs, chasing CI failures, and upgrading dependency trees, all in a single continuous session.

What is new here: agentic coding has so far been stuck in "one prompt, one result." Everything Mode is the first commercial agent that breaks out of that loop.

The context you need

OpenAI Codex originally shipped in 2021, was deprecated in 2023, and relaunched in 2025 on top of GPT-5. The relaunch was rough, and Claude Code and Cursor dominated early for two reasons. First, Claude consistently led SWE-bench Verified, the industry benchmark for agentic coding. Second, Cursor's IDE integration was noticeably more developer-friendly.

OpenAI rebuilt Codex end-to-end in the back half of 2025. The new GPT-5 Turbo-based Codex nudged slightly ahead of Claude Sonnet 4.5 and 4.6 on SWE-bench Verified, and OpenAI shipped its own IDE called Codex Studio to compete with Cursor. Even so, "credible Claude Code alternative" was a label it never quite earned.

Source: unsplash.com · Unsplash License

Everything Mode is the move to change that. Instead of competing on raw code generation, it reframes the category as long-running agents.

Breaking down the core

Computer-use, integrated

The first pillar is computer-use integration. It follows the same lineage as Claude Computer Use and OpenAI's own Operator, but this time it is wired deeply into an IDE environment. Codex can open a browser to read documentation, check a dashboard, or move a ticket in a project management tool.

Concrete examples:

- When a GitHub Actions run fails, Codex opens the Actions tab, reads the log, finds the cause, and opens a fix PR.

- When a Sentry error fires, Codex opens the Sentry console, reads the stack trace, navigates to the file, and patches it.

- When a Figma design arrives, Codex opens the Figma link, reads the layout, and ports it to a React component.

The point is not that an API call got replaced — it is that tools with no API are now in play.
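
One way to picture "tools with no API are now in play" is a reader that prefers an API when one exists and falls back to driving the screen when it does not. The sketch below is illustrative only: `Tool`, `read_from`, and the stand-in fetchers are hypothetical names, not part of any published Codex interface.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Tool:
    """A hypothetical external tool the agent may need to read from."""
    name: str
    api_fetch: Optional[Callable[[str], str]] = None  # None means no API exists
    ui_fetch: Optional[Callable[[str], str]] = None   # screen-driven fallback


def read_from(tool: Tool, resource: str) -> tuple[str, str]:
    """Return (channel, content), preferring the API and falling back to the UI."""
    if tool.api_fetch is not None:
        return "api", tool.api_fetch(resource)
    if tool.ui_fetch is not None:
        return "computer-use", tool.ui_fetch(resource)
    raise LookupError(f"no way to reach {tool.name}")


# Stand-in fetchers (assumptions): a real agent would call an HTTP client
# or drive a browser here.
ci = Tool("ci", api_fetch=lambda run: f"log for {run}")
dashboard = Tool("dashboard", ui_fetch=lambda page: f"screen text of {page}")

print(read_from(ci, "run-1"))
print(read_from(dashboard, "errors"))
```

The second call is the case the article highlights: the dashboard exposes no API, so the only available channel is the screen.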

Long-horizon memory

The second pillar is memory. Codex keeps project-scoped long-horizon memory. Here is the publicly documented memory stack:

| Layer | Lifetime | Purpose | Example |
|---|---|---|---|
| Session memory | Current session | Single-task context | Files currently being refactored |
| Project memory | Until project deletion | Codebase knowledge | Architecture decisions, team conventions |
| User memory | Account lifetime | Developer preferences | Language, style, review patterns |
| Global memory | Contributes to OpenAI training | Improvement data | Opt-in only |

Project memory is the key layer. Tell it once — "our team doesn't use Tailwind, only vanilla CSS" or "DB migrations always go in migrations/ with a numeric prefix" — and every subsequent session follows the convention automatically.
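
To make the layering concrete, here is a minimal sketch of scoped memory in which a convention stored at project level survives the end of a session. It is not OpenAI's implementation: the `MemoryStack` class, its method names, and the lookup order (session first, then project, then user) are assumptions made for illustration, and the global layer is left out because the table describes it as training data rather than something recalled at run time.

```python
from dataclasses import dataclass, field
from typing import Optional

# Assumed lookup order: narrowest scope first; a lookup stops at the first hit.
LAYERS = ("session", "project", "user")


@dataclass
class MemoryStack:
    layers: dict[str, dict[str, str]] = field(
        default_factory=lambda: {name: {} for name in LAYERS}
    )

    def remember(self, layer: str, key: str, value: str) -> None:
        """Store a fact in one named layer."""
        if layer not in self.layers:
            raise KeyError(f"unknown layer: {layer}")
        self.layers[layer][key] = value

    def recall(self, key: str) -> Optional[str]:
        """Return the value from the narrowest layer that holds the key."""
        for name in LAYERS:
            if key in self.layers[name]:
                return self.layers[name][key]
        return None

    def end_session(self) -> None:
        """Drop session memory; project and user memory persist."""
        self.layers["session"].clear()


memory = MemoryStack()
memory.remember("project", "css", "vanilla CSS only, no Tailwind")
memory.remember("session", "current_file", "src/app.css")
memory.end_session()
print(memory.recall("css"))           # still available in the next session
print(memory.recall("current_file"))  # gone with the session
```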

Multi-tool agentic loop

The third pillar is a multi-tool execution loop. The old Codex was limited to one tool at a time. Everything Mode runs Code Interpreter, Browser, Terminal, File System, Git, the GitHub API, Docker, and database clients in parallel to complete a single task. OpenAI built this on an internal framework called Atlas. Atlas tracks the state of multiple tools simultaneously and decides the next action.
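
Atlas itself is not public, so the following is only a toy sketch of the general idea: keep one shared state across several tools and let a policy pick the next action from that state. Every name here (`choose_next`, `run_loop`, the tool stubs, the status strings) is hypothetical, and the loop steps sequentially rather than in parallel to keep the example short.

```python
from typing import Callable, Optional

State = dict[str, str]      # tool name -> last observed status
Action = tuple[str, str]    # (tool name, command)


def choose_next(state: State) -> Optional[Action]:
    """Toy policy: patch the code, run the tests, commit, then stop."""
    if state.get("terminal") != "tests passed":
        if state.get("filesystem") != "patched":
            return ("filesystem", "apply patch")
        return ("terminal", "run tests")
    if state.get("git") != "committed":
        return ("git", "commit")
    return None


def run_loop(
    tools: dict[str, Callable[[str], str]],
    state: State,
    max_steps: int = 10,
) -> list[Action]:
    """Run actions until the policy returns None or the step budget is spent."""
    history: list[Action] = []
    for _ in range(max_steps):
        action = choose_next(state)
        if action is None:
            break
        name, command = action
        state[name] = tools[name](command)  # record the tool's new status
        history.append(action)
    return history


# Stub tools (assumptions) that always succeed.
stub_tools = {
    "filesystem": lambda command: "patched",
    "terminal": lambda command: "tests passed",
    "git": lambda command: "committed",
}

print(run_loop(stub_tools, {}))
```

The step budget matters in a design like this: without `max_steps`, a tool that never reaches the expected status would keep the loop running indefinitely.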

The bigger picture

By 2026 the agentic coding market is settling into three camps.

| Camp | Flagship product | Positioning | Strengths | Weaknesses |
|---|---|---|---|---|
| Anthropic | Claude Code | Agentic first | Reliability, code quality | Weak IDE integration |
| OpenAI | Codex Everything Mode | Multi-tool + memory | Broader toolset, long-horizon memory | Unproven reliability |
| 3rd party | Cursor, Windsurf, Zed | IDE first | UX, speed | Model quality depends on vendor APIs |

If Codex Everything Mode delivers on its promises, it opens up the "days-long refactor" territory where Claude Code has run essentially unopposed. Early Hacker News reviews are mixed: positive takes like "handled 15 GitHub issues concurrently without context drift" alongside negatives like "computer-use is still slow and browser automation fails often."

Source: unsplash.com · Unsplash License

The category is moving from "code generation" to "software operations." Everything Mode is the manifesto.

The pricing play is interesting too. OpenAI folded Codex Everything Mode into the existing ChatGPT Pro tier at $200/month and launched a separate Codex Max tier at $400/month with 10x the computer-use quota. Against Claude Code's $100/month Pro + API metering model, OpenAI is clearly going in on price to pull developers across.
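
The two OpenAI tiers are easier to compare per unit of computer-use quota. The short calculation below uses only the prices and the 10x multiple quoted above; treating the $200 tier's quota as one unit is an assumption for normalization, since no absolute quota figures are given.

```python
def price_per_quota_unit(monthly_price: float, quota_multiple: float) -> float:
    """Monthly price divided by quota, with the base tier's quota defined as 1."""
    return monthly_price / quota_multiple


pro = price_per_quota_unit(200, 1)         # base tier, quota normalized to 1
codex_max = price_per_quota_unit(400, 10)  # 2x the price for 10x the quota

print(pro)              # 200.0
print(codex_max)        # 40.0
print(pro / codex_max)  # 5.0
```

Under that normalization, the higher tier costs twice as much but works out to one fifth of the price per unit of computer-use quota.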

So what actually changes

Three things for developers.

The unit of work changes. It moves from "one prompt = one diff" to "one prompt = multi-day project." As this sticks, the developer's role shifts from code writer to agent manager. Claude Code started this transition; Everything Mode takes it close to its peak.

OpenAI lock-in hardens. Project memory and User memory accumulate on OpenAI's side, and there is no way to migrate that memory to Claude Code or Cursor. OpenAI has historically had weaker developer lock-in than Anthropic or Google — Everything Mode fills that gap.

Computer-use reliability now dictates product quality. Browser automation is core to Everything Mode. When it fails, a days-long task collapses. Early reviews flag session stability as the top improvement axis — watch the next 90 days for how fast OpenAI ships fixes here.
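
A back-of-the-envelope calculation shows why per-step reliability matters so much for long tasks. The sketch assumes every browser action succeeds independently with the same probability and that there are no retries or checkpoints; the 99% figure and the step counts are illustrative, not measured values for Codex.

```python
def task_success_probability(step_success: float, steps: int) -> float:
    """Chance that all steps succeed, assuming independent steps and no retries."""
    if not 0.0 <= step_success <= 1.0:
        raise ValueError("step_success must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return step_success ** steps


for steps in (10, 100, 500):
    chance = task_success_probability(0.99, steps)
    print(f"{steps} steps at 99% each: {chance:.1%} chance of finishing")
```

Under these assumptions, a step that works 99% of the time still leaves only about a 37% chance of completing a 100-step task without a failure, which is why retries, checkpoints, and session stability carry so much weight for multi-day runs.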

For the capital and hardware side of the same shift, pair this with Amazon's $25B additional investment in Anthropic and the 5GW Trainium dedication. Hardware, capital, and the agent layer are all moving in sync at the frontier.

Further reading

OpenAI's Codex Can Now Drive a Locked Mac — Autonomous Agents Step Toward 'Working While You're Away'

GPT-5.4 Deep Dive — The First General-Purpose Model That Actually Uses Your Computer

OpenAI Launches ChatGPT Pro at $100/Month — Is This the Real Answer to Claude Code?
