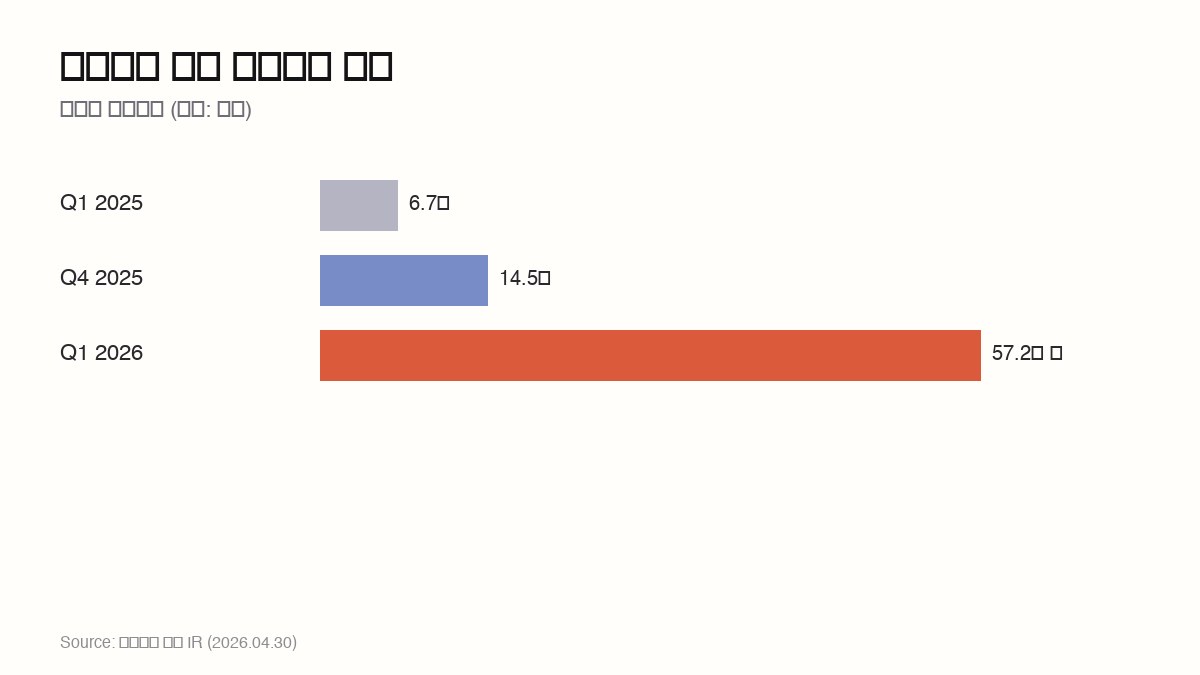

Samsung's Q1: KRW 57.2T Operating Profit, Up 8.5× on a Memory Surge

Samsung Electronics posted a record KRW 57.2 trillion in operating profit for Q1 2026, 8.5× the year-ago quarter, as memory profit rose about 30×. HBM alone out-earned mobile and display combined. AI memory has rewritten the company's profit structure.

₩57.2T

Suwon set off fireworks. On April 30, 2026, Samsung Electronics reported KRW 57.2 trillion in Q1 operating profit — the highest quarter in company history. That's 4× the prior quarter (KRW 14.5T) and 8.5× the year-ago quarter (KRW 6.7T). Nine months earlier, the consensus narrative said Samsung had ceded the AI memory cycle to SK Hynix. Then HBM3E 12-high cleared NVIDIA qualification in September 2025, and the quarterly profit doubled, doubled again, and broke the all-time record. The company's profit mix flipped — from "TVs + phones + chips" to "AI memory + everything else."

The players — Samsung DS, NVIDIA, SK Hynix, Micron

Samsung DS (Device Solutions) bundles memory, System LSI, and foundry, and is run by Vice Chairman Jun Young-hyun. Revenue stagnated through 2024-2025 while SK Hynix held the HBM lead; the September 2025 qualification of HBM3E 12-high on NVIDIA's H200 and B200 platforms turned the tide. In Q1 the segment posted KRW 80T in revenue and KRW 48T in operating profit, carrying 84% of company-wide profit.

NVIDIA is the dominant buyer. Its Q1 HBM purchases reached roughly $29B, and Samsung's share rose to 35%, closing in on SK Hynix's 40% from a 30/50 split a year earlier. NVIDIA's Blackwell B200 and Rubin designs use 8 HBM stacks per GPU, so HBM demand moves in lockstep with GPU demand.
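As a rough illustration, the shares above can be turned into dollar volumes. This is a sketch under stated assumptions: it applies the 40/35/25 split to NVIDIA's ~$29B of Q1 purchases (the article quotes Micron's 25% as a general share), takes the year-ago split as SK Hynix 50%, Samsung 30%, and uses hypothetical helper names.

```python
# Supplier split of NVIDIA's ~$29B Q1 2026 HBM purchases (USD billions).
# ASSUMPTION: the 40/35/25 shares quoted in the article apply to this spend.
NVIDIA_HBM_SPEND_USD_B = 29.0

shares_now = {"SK Hynix": 0.40, "Samsung": 0.35, "Micron": 0.25}
shares_year_ago = {"SK Hynix": 0.50, "Samsung": 0.30}  # the "30/50" split

def implied_revenue(spend_b: float, shares: dict[str, float]) -> dict[str, float]:
    """Dollar volume ($B) implied by each supplier's share of total spend."""
    return {name: round(spend_b * share, 2) for name, share in shares.items()}

def share_change_pp(now: dict[str, float], before: dict[str, float]) -> dict[str, float]:
    """Share change in percentage points for suppliers present in both periods."""
    return {name: round((now[name] - before[name]) * 100, 1) for name in before}

revenue = implied_revenue(NVIDIA_HBM_SPEND_USD_B, shares_now)
print(revenue)                                        # {'SK Hynix': 11.6, 'Samsung': 10.15, 'Micron': 7.25}
print(share_change_pp(shares_now, shares_year_ago))  # {'SK Hynix': -10.0, 'Samsung': 5.0}
```

On these assumptions Samsung's NVIDIA HBM volume is about $10B for the quarter, roughly $1.5B behind SK Hynix.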

SK Hynix held 50% HBM share entering 2026 but slipped to 40% in Q1. Vice Chairman Kwak Noh-jung said publicly on April 25 that "Samsung's catch-up is faster than expected." HBM4 mass production began at SK Hynix in April; Samsung joins in June with NVIDIA Rubin qualification.

Micron, the US memory maker, holds about 25% share. CEO Sanjay Mehrotra is targeting first qualification on HBM4E (seventh generation) while ramping the Boise, Idaho fab, which runs 12-18 months behind its Korean rivals.

Source: spoonai chart · Samsung official IR (April 30, 2026)


The decomposition — KRW 57.2T

| Segment (operating profit, KRW) | Q1 2025 | Q4 2025 | Q1 2026 | YoY |
|---|---|---|---|---|
| DS (Semiconductor) | 1.9T | 5.6T | 48.0T | 25× |
| Memory (within DS) | 1.5T | 5.0T | 45.0T | 30× |
| HBM only (within Memory) | 0.6T | 3.5T | 25.0T | 41× |
| MX (Mobile) | 3.4T | 5.5T | 5.5T | 1.6× |
| VD/DX | 0.7T | 2.0T | 2.0T | 2.9× |
| Harman | 0.3T | 0.7T | 0.8T | 2.7× |
| Display | 0.4T | 0.7T | 0.9T | 2.3× |
| Total | 6.7T | 14.5T | 57.2T | 8.5× |

The wedge is HBM. On its own it earned KRW 25T, 44% of total profit and 2.7× the combined Mobile + VD/DX + Harman + Display profit (KRW 9.2T). One product line out-earned every non-semiconductor business unit combined.
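The table's arithmetic can be checked directly. A minimal sketch, assuming the figures are already rounded to KRW 0.1T, so recomputed ratios can differ slightly from the quoted ones (for example 3.9× versus the "4×" quoted against Q4 2025). Memory and HBM are treated as subsets of DS and left out of the total.

```python
# Operating profit by segment, KRW trillions, copied from the table.
# Memory and "HBM only" sit inside DS, so only the top-level segments are summed.
SEGMENTS = {
    # segment: (Q1 2025, Q4 2025, Q1 2026)
    "DS": (1.9, 5.6, 48.0),
    "MX": (3.4, 5.5, 5.5),
    "VD/DX": (0.7, 2.0, 2.0),
    "Harman": (0.3, 0.7, 0.8),
    "Display": (0.4, 0.7, 0.9),
}
HBM_Q1_2026 = 25.0

total_q1_2025 = sum(q1_25 for q1_25, _, _ in SEGMENTS.values())
total_q4_2025 = sum(q4_25 for _, q4_25, _ in SEGMENTS.values())
total_q1_2026 = sum(q1_26 for _, _, q1_26 in SEGMENTS.values())
non_ds_q1_2026 = total_q1_2026 - SEGMENTS["DS"][2]

print(round(total_q1_2026, 1))                      # 57.2 (matches the Total row)
print(round(total_q1_2026 / total_q1_2025, 1))      # 8.5 (YoY)
print(round(total_q1_2026 / total_q4_2025, 1))      # 3.9 (QoQ, quoted as ~4x)
print(round(SEGMENTS["DS"][2] / total_q1_2026, 2))  # 0.84 (DS share of profit)
print(round(HBM_Q1_2026 / total_q1_2026, 2))        # 0.44 (HBM share of profit)
print(round(HBM_Q1_2026 / non_ds_q1_2026, 1))       # 2.7 (HBM vs all non-DS units)
```

The segment rows sum exactly to the Total row in all three quarters, which supports reading Memory and HBM as nested lines rather than separate units.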

ASP dynamics drive part of this. HBM3E 12-high ASP hit ~$35/GB in Q1 2026, up 4.4× from $8/GB in Q1 2025. NVIDIA and AMD designs put 8 HBM stacks per GPU, and qualification on both 8-high and 12-high SKUs lifted average pricing in one step.
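The ASP step-up, and what it could mean per GPU, can be sketched as follows. The 4.4× ratio uses the article's $8/GB and $35/GB. The 36 GB per 12-high stack and an all-12-high, 8-stack configuration are assumptions for illustration, not figures from Samsung's release, so the per-GPU dollar amount is hypothetical.

```python
# ASP figures from the article, USD per GB.
ASP_Q1_2025_USD_PER_GB = 8.0
ASP_Q1_2026_USD_PER_GB = 35.0
STACKS_PER_GPU = 8   # from the article
GB_PER_STACK = 36    # ASSUMPTION: 12-high stack of 24Gb dies (12 x 3 GB)

def hbm_cost_per_gpu(asp_per_gb: float, stacks: int = STACKS_PER_GPU,
                     gb_per_stack: int = GB_PER_STACK) -> float:
    """Hypothetical HBM content value per GPU: stacks x capacity x price per GB."""
    return stacks * gb_per_stack * asp_per_gb

print(round(ASP_Q1_2026_USD_PER_GB / ASP_Q1_2025_USD_PER_GB, 1))  # 4.4
print(hbm_cost_per_gpu(ASP_Q1_2026_USD_PER_GB))                    # 10080.0
print(hbm_cost_per_gpu(ASP_Q1_2025_USD_PER_GB))                    # 2304.0
```

Because HBM value per GPU scales with stacks × capacity × price, a single qualification that moves both the product mix (taller stacks) and the price per GB compounds into one large step.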

Samsung's January guidance for Q1 was KRW 30-35T in operating profit, revised up to KRW 45-50T in March. The final print beat even the revised range, a sign that AI memory demand is accelerating faster than Samsung's own internal forecasts.
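To put the beat in proportion, this sketch compares the guidance ranges. Using range midpoints is an assumption of the sketch, since the article gives only ranges.

```python
# Q1 2026 operating-profit guidance, KRW trillions, from the article.
JANUARY_RANGE = (30.0, 35.0)
MARCH_RANGE = (45.0, 50.0)
ACTUAL = 57.2

def midpoint(bounds: tuple[float, float]) -> float:
    """Midpoint of a (low, high) guidance range."""
    return (bounds[0] + bounds[1]) / 2

def pct_above(value: float, reference: float) -> float:
    """How far value sits above reference, in percent."""
    return round((value / reference - 1) * 100, 1)

print(pct_above(midpoint(MARCH_RANGE), midpoint(JANUARY_RANGE)))  # 46.2 (March vs January midpoint)
print(pct_above(ACTUAL, MARCH_RANGE[1]))                         # 14.4 (actual vs top of March range)
print(pct_above(ACTUAL, midpoint(JANUARY_RANGE)))                # 76.0 (actual vs January midpoint)
```

Even against the top of the already-raised March range, the print came in about 14% higher.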

What each side gets — Samsung, NVIDIA, Korean economy

For Samsung, two outcomes arrive at once. First, the "close the HBM gap, then overtake" trajectory now shows up in the numbers: SK Hynix down to 40%, Samsung up to 35%. With the HBM4 ramp in June, parity with SK Hynix or a Samsung lead is plausible by Q4 2026. Second, room to recycle capital: 30-40% of the KRW 48T DS segment profit could fund foundry R&D and the 2nm ramp, narrowing the gap to TSMC.

For NVIDIA, supply becomes more diversified and more stable. Heavy reliance on a single vendor was a structural risk, and lifting Samsung to 35% reduces NVIDIA's exposure. Jensen Huang said at GTC in April that "HBM supply diversification is the gating factor on GPU ramp," and this earnings print is what he was watching for.

For the Korean economy, the effect runs through two channels: a wider trade surplus and a larger GDP contribution. Q1 Korean semiconductor exports topped $150B for the first time, and Samsung's single-quarter operating profit equals roughly 1.7% of Korean GDP. The AI memory cycle is now the largest single growth engine of the Korean economy.
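The capital-recycling range works out as follows. This sketch assumes the 30-40% applies to a single quarter's KRW 48T DS profit; the article does not say whether the share is meant per quarter or per year, and `reinvestable` is a hypothetical helper.

```python
# DS operating profit for Q1 2026, KRW trillions.
DS_PROFIT_Q1_2026 = 48.0

def reinvestable(profit: float, low: float = 0.30, high: float = 0.40) -> tuple[float, float]:
    """Return the (low, high) KRW amount implied by a reinvestment share range."""
    return round(profit * low, 1), round(profit * high, 1)

print(reinvestable(DS_PROFIT_Q1_2026))  # (14.4, 19.2)
```

On that reading, one quarter of DS profit could free roughly KRW 14-19T for foundry R&D and the 2nm ramp.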

Source: news.samsung.com · Samsung press kit


Pattern matching — what worked, what didn't

2017-2018 memory super-cycle: Samsung hit KRW 14.4T operating profit in a single quarter on DRAM price spikes. The current cycle differs because HBM is a structural product category — less pricing volatility, more demand-driven economics.

TSMC 2020-2024 cycle: 5nm/3nm simultaneous ramps for iPhone and AI chip demand pushed operating margin past 50%. Samsung is replicating the curve in HBM but still lags TSMC in foundry margins.

DRAM crash, 2019: 60% price drop pulled Samsung's quarterly profit down to KRW 3.5T. AI memory could see similar volatility if GPU demand softens in 2027-2028 — keep this scenario in the modeling.

NAND deficit quarters, 2022-2023: heavy single-category dependence amplified company-level swings when one product flipped negative. Samsung diversifies through NAND, LPDDR, system LSI, and foundry — but HBM at 44% is still concentrated.

Counter-plays — SK Hynix, Micron, China

SK Hynix started HBM4 production in April, and Samsung's NVIDIA Rubin qualification follows in June, setting up a 6-9 month head-to-head ramp battle. Kwak's stated target is to be "first to mass-produce HBM4E," the seventh generation, and reclaim the lead.

Micron's Boise HBM4 mass production lands in Q1 2027, 12-18 months behind its Korean rivals. Political pressure on NVIDIA to buy from US-domiciled suppliers may protect Micron's 25% share regardless.

Chinese players (YMTC for NAND, CXMT for DRAM) are 18-24 months behind on DRAM/HBM technology, with US export controls limiting EUV and high-bandwidth packaging tools. Near-term competition stays among the three Korean/US players, but watch Chinese HBM emergence in 2028-2029.

So what changes — for builders, founders, investors, end users

For builders, GPU and AI training costs should fall within 6-12 months. HBM ASP normalization could lower NVIDIA H200/B200 pricing or data-center rental rates, easing LLM training costs. Bigger models, longer contexts, and more inference calls become economically feasible over the next 12 months.

For founders, the takeaway is "AI infra cost falls → deeper applications get viable." AI application companies will raise capital faster than infra companies. The 100× ARR multiples on Sierra and Decagon are partially justified by this cost curve.

For investors, Korean semiconductor ETFs need to be re-rated. Samsung's PER expanded from 12× in 2025 to 18× in Q1 2026 and could push to 25-30× in the next 12 months. SK Hynix follows a similar trajectory.
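Since share price equals P/E times earnings per share, a multiple re-rating maps directly onto price when earnings are held flat. The sketch below makes that simplifying assumption (`eps_growth` defaults to zero) and is illustrative only, not a forecast.

```python
def implied_price_change(pe_from: float, pe_to: float, eps_growth: float = 0.0) -> float:
    """Percent share-price change when the P/E moves from pe_from to pe_to.

    Price = P/E x EPS, so the price ratio is (pe_to / pe_from) x (1 + eps_growth).
    """
    return round(((pe_to / pe_from) * (1 + eps_growth) - 1) * 100, 1)

print(implied_price_change(12, 18))  # 50.0 (2025 -> Q1 2026)
print(implied_price_change(18, 25))  # 38.9 (low end of the 12-month case)
print(implied_price_change(18, 30))  # 66.7 (high end of the 12-month case)
```

Any earnings growth on top of the re-rating compounds through the `(1 + eps_growth)` term; an earnings decline, as in the skeptic's case, would offset it.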

For end users, AI service price cuts could begin in late 2026 or Q1-Q2 2027. Per-token pricing for ChatGPT, Claude, and Gemini has 30-50% room to fall. Whether that flows through to consumers or is absorbed as company margin depends on competitive dynamics.

Stakes

- Wins: Jay Y. Lee (Chairman, Samsung) — "AI memory catch-up" thesis validated at record profit; Jun Young-hyun (DS Vice Chairman) — HBM3E 12-high qualification + HBM4 June ramp announced; Jensen Huang (NVIDIA CEO) — supply diversification secured for GPU ramp.

- Loses: SK Hynix (Kwak Noh-jung Vice Chairman) — HBM share down 10pp from 50% to 40%; Micron Boise — Korean ramp acceleration leaves only US politics as the lever; YMTC/CXMT — US export controls slow catch-up.

- Watching: TSMC (C.C. Wei) — Samsung foundry R&D recapitalization could narrow the gap; AMD (Lisa Su) — HBM diversification choices for its in-house GPUs; Korean Ministry of Trade — how to leverage the "AI memory super-cycle" into national policy.

The skeptic's case — "Memory super-cycles are 18-24 months"

Christopher Rolland (Susquehanna analyst) and other memory-cycle skeptics warn that AI memory super-cycles last at most 18-24 months. The 2017-2018 cycle ended in a 60% price collapse after eight quarters, and HBM could see similar volatility if GPU demand softens. A responsible model carries a 2027 scenario with Samsung profit at KRW 20T alongside the headline 57T.

Tim Culpan (former Bloomberg columnist) and like-minded analysts highlight HBM4 ramp risk. Yields on 12-high and 16-high stacks can take 3-4 quarters to stabilize, and if pricing does not keep pace with ramp costs, operating margin could compress from 60% (in line with the DS segment's KRW 48T on KRW 80T revenue) to 40%.

The skeptic case has two prongs, softening GPU demand and HBM4 yield and cost risk, and both will be tested by guidance over the next 6-12 months.
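The margin-compression scenario can be sized with one multiplication. The 60% starting point matches the DS segment's Q1 figures; holding revenue flat at KRW 80T is an assumption made only to isolate the margin effect.

```python
# DS segment, KRW trillions. ASSUMPTION: revenue held flat to isolate the margin effect.
DS_REVENUE_KRW_T = 80.0

def operating_profit(revenue: float, margin: float) -> float:
    """Operating profit = revenue x operating margin, rounded to 0.1T."""
    return round(revenue * margin, 1)

current = operating_profit(DS_REVENUE_KRW_T, 0.60)   # matches the reported KRW 48T
stressed = operating_profit(DS_REVENUE_KRW_T, 0.40)  # skeptic's compressed margin
print(current, stressed, round(current - stressed, 1))  # 48.0 32.0 16.0
```

At constant revenue, a 20-point margin squeeze would remove about KRW 16T from a single quarter's DS profit.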

3-Line Summary

- Samsung's Q1 operating profit hit a record KRW 57.2T, 8.5× the year-ago quarter, with the DS (semiconductor) segment up 25×.

- HBM alone at KRW 25T = 44% of total profit, more than mobile + VD combined.

- HBM4 ramps in June; Samsung's share of NVIDIA's HBM purchases is at 35%, closing on SK Hynix's 40%.
