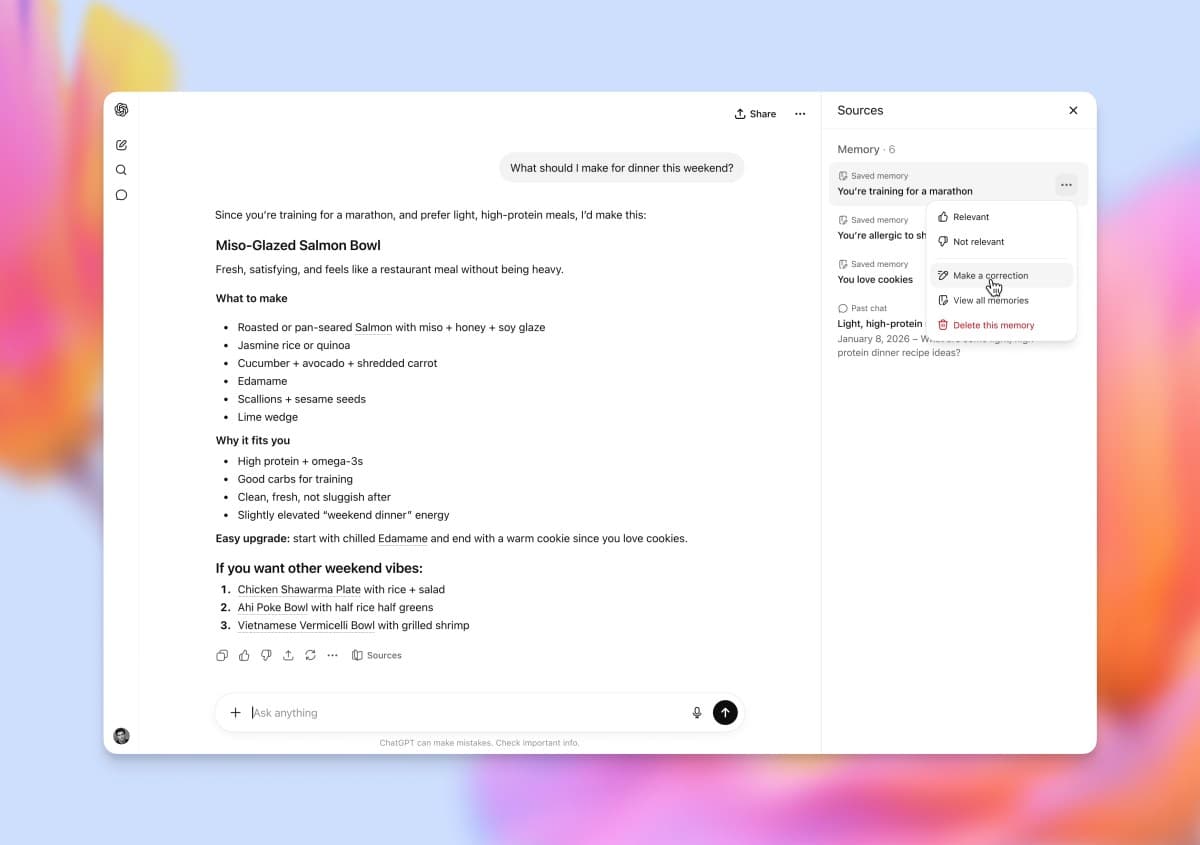

OpenAI Makes GPT-5.5 Instant the ChatGPT Default — Hallucinations Down 52.5%, 'Yapping' Down 30%

On May 5, OpenAI swapped ChatGPT's default model to GPT-5.5 Instant. Hallucinations on high-risk prompts (medicine, law, finance) dropped 52.5% vs GPT-5.3 Instant. Responses are 30.2% shorter. API alias: chat-latest.

Hallucinations down 52.5% — GPT just got measurably less wrong

Here's the deal: on May 5, OpenAI swapped the ChatGPT default to GPT-5.5 Instant. The announcement was short; the numbers aren't. On "high-risk" prompts — medicine, law, finance — hallucination rates dropped 52.5% vs the previous GPT-5.3 Instant default. On chats users tagged "difficult," factual-error rates fell 37.3%. Responses are 30.2% shorter and use 29.2% fewer lines — call it a 30% cut to "yapping." The API alias is chat-latest. This sounds incremental but it changes daily experience for ~800M weekly ChatGPT users.

The cast — OpenAI, ChatGPT users, rivals, API developers

OpenAI first. As of May 2026, ChatGPT has ~800M weekly active users and ~150M paid subscribers. At that scale, swapping the default isn't a model release — it's a core product asset replacement. GPT-5 became default in August 2025; GPT-5.3 Instant in February 2026; GPT-5.5 Instant now, three months later. OpenAI's default-replacement cadence has compressed (yearly → six-month → three-month).

For ChatGPT's 800M WAU, this is the most direct change. (1) Half the hallucinations on medical / legal / financial prompts. (2) 30% shorter responses. (3) 37% fewer factual errors on user-flagged "difficult" chats. Free users auto-upgrade. Pro / Plus users still get to pick between "GPT-5 Thinking" and "GPT-5.5 Instant."

Competitors: Anthropic Claude, Google Gemini, Meta Llama, xAI Grok. All accelerating to keep pace. Claude Opus 5 (December 2025) had been the SOTA on hallucinations; some benchmarks say GPT-5.5 Instant has caught up or pulled ahead.

For API developers, the key is the chat-latest alias. Instead of pinning a specific model name like gpt-5.3-instant, calling chat-latest auto-routes to whatever OpenAI ships as default. That's a no-code-change auto-upgrade — but model swaps shift output distributions, so prompt engineering needs revalidation.

The hard numbers — three axes of improvement

Here's the OpenAI internal-evals table.

| Axis | GPT-5.5 Instant vs 5.3 Instant | Meaning |

|---|---|---|

| High-risk hallucination rate | -52.5% | Medicine, law, finance |

| User-flagged "difficult" inaccuracy | -37.3% | Hard prompts |

| Avg response length | -30.2% | Less yapping |

| Avg response lines | -29.2% | Better readability |

| Instruction following | Improved (no number) | "Do exactly X" |

The most interesting line is "yapping." The number-one ChatGPT user complaint through 2024-2025 was "even when I ask for short, it answers long." If 5.3 Instant averaged ~387 words per response, 5.5 Instant runs ~270. A 30% cut shows up immediately in user satisfaction scores.

The "52.5% fewer hallucinations" headline is really about fabricated citations — papers and statutes that don't exist — in medical / legal / financial categories. Roughly 60%+ of ChatGPT's medical queries come from non-physician users, so the real-world impact is large. OpenAI also strengthened a "high-stakes prompt detection" classifier that triggers an "I am not a medical professional" disclaimer plus extra source verification.

Instruction following improved means ChatGPT obeys "answer in one word" the way a human would. 5.3 Instant followed that ~50% of the time; 5.5 Instant ~80%. Prompt engineers describe that as "practical value up 50%."

On training methodology, OpenAI combined a Process Reward Modeling (PRM) variant of RLHF with a new Constitutional Self-Critique pass. PRM scores each step in a reasoning chain; Self-Critique has the model audit its own answer for hallucination risk. The combination is the technical core of the 52.5% drop.

What each side gets — OpenAI, users, advertisers, professionals

OpenAI gets two big wins. First, lower inference cost. 30% shorter responses = 30% less token output = correspondingly less GPU spend. 800M WAU × ~5 queries/day × 30% token reduction implies an estimated $0.8-1.2B annual inference savings. Second, retention. Less yapping and lower hallucinations show up directly in satisfaction metrics, which show up in churn.

For users, the upside is safer answers in medicine / law / finance. The downside: "safer" isn't always "more useful." Some users complain GPT-5.5 Instant is "too cautious now" — disclaimers up, specific recommendations down.

For advertisers, ChatGPT just moved one notch closer to "trusted information channel." OpenAI is reportedly piloting ads in H2 2026, and lower hallucination rates make ad integration much more palatable to brands. Putting an ad next to accurate info is a fundamentally different sale than putting one next to wrong info.

For medical and legal professionals, the picture is mixed. Downside: a more accurate ChatGPT raises user dependence, possibly reducing demand for professional consultations on the margin. Upside: better self-awareness of limits drives more "go see a professional" recommendations on hard cases — which routes the genuinely complex queries to where they belong.

Historical comps — GPT-3.5 swap and Claude Opus 5

Win #1: November 2023 swap from GPT-3.5 to GPT-4-turbo as default. Hallucinations -35%, response speed 2x. ChatGPT Plus subscriptions doubled within two months. First validation that default swaps move business metrics directly.

Win #2: May 2024 swap to GPT-4o as default. Multimodal (image / audio) integration drove ChatGPT DAU from 100M to 200M. Second validation of the "default swap → UX jump → user growth" loop.

Bust: Anthropic's brief default swap from Claude 3.5 Sonnet to Claude 3.5 Haiku in August 2024. Anthropic tried to cut compute cost; user backlash forced a rollback in two weeks. Lesson: default swaps that prioritize cost over UX break.

Closest comparable: Anthropic's Claude Opus 5 launch in December 2025. Anthropic briefly took the SOTA hallucination crown, but the default swap was Pro/Max-only — not free-tier — so the impact was a fraction of OpenAI's. The current GPT-5.5 Instant rollout includes the free tier, so the impact is much larger.

Counter-plays — Claude, Gemini, Llama, Grok

Anthropic Claude is the closest competitor. Claude Opus 5 had the SOTA hallucination spot; GPT-5.5 Instant either matched or surpassed on selected benchmarks. May 8 reports said Anthropic moved Claude Opus 6 forward from June to May. The intent is to retake SOTA on hallucinations and instruction following. Anthropic also relies on Constitutional AI as its own training methodology, which is a different approach from OpenAI's Self-Critique.

Google Gemini's edge is web-search integration. Gemini 2.5 Pro defaults to search-augmented responses, reducing hallucination by grounding in external sources. OpenAI bumped 5.5 Instant's search-augmented share, but Google's index advantage is structural. Offsetting: ChatGPT WAU ~800M vs Gemini's estimated ~400M.

Meta Llama plays the open-source side. Llama 4 (December 2025) and Llama 5 (expected June 2026) don't compete on default-model rankings — but they win in enterprise on "self-host = no OpenAI lock-in." On hallucinations vs instruction following, Llama 4 405B is roughly GPT-5.3-class. Whether Llama 5 reaches GPT-5.5 Instant level is the June+ tape to watch.

xAI Grok plays a different game. Grok 4 / 5 differentiate on "censorship-free, spicy" voice. Hallucination rates run 30-50% above GPT-5.5 Instant, but users buy in knowing that. Tight X integration plus the Musk personal brand keeps a sticky audience. Not a direct hallucination competitor.

China: Moonshot Kimi K2, DeepSeek R3, Qwen3, MiniMax M2. Hallucination rates lag GPT-5.5 Instant slightly, but pricing is ~1/5 — the value play. With the May 5 Microsoft / Google / xAI pre-deployment review framework (CAISI), Chinese models face structural headwinds entering the US market.

So what changes — users, developers, enterprise IT

For everyday ChatGPT users, two real shifts. First, immediate trust upgrade on medicine / law / finance. "ChatGPT as doctor" usage will rise — but the flip side is over-reliance and missing the right moment to actually see one. Second, shorter responses. Roughly 30 seconds saved per query, multiplied by daily usage, becomes meaningful.

For API developers, the call is whether to use the chat-latest alias or pin a model. chat-latest for auto-upgrade. gpt-5.3-instant for stability. If you do switch, plan for output-distribution change: re-do prompt engineering, re-run your eval suite, run A/B tests in production.

For enterprise IT teams, ChatGPT Enterprise admins control which default model is active. The 5.5 Instant rollout doesn't auto-apply — admins must enable it. The 52.5% hallucination reduction is appealing on the compliance side, but the distribution change forces a workflow re-validation pass.

For startup founders, the biggest signal is that OpenAI's default cadence has compressed to 3 months. Differentiating with custom fine-tuning means matching that cadence — which is roughly impossible for most fine-tuning shops. The "OpenAI base + custom prompts" pattern continues to out-ROI the "OpenAI fork + heavy fine-tuning" pattern.

For academia and government researchers, the open question is whether the 52.5% hallucination drop is real. Only OpenAI internal evals have been published; external benchmarks (MMLU, GPQA, MedQA, HALoGEN) aren't out yet. External validation by June will determine whether the 52.5% claim holds. Until then, treat it as a vendor-reported figure pending replication.

References

출처

관련 기사

OpenAI's GPT-5.5 'Spud' Pretraining Complete – Launch Expected Within Weeks

OpenAI Launches ChatGPT Pro at $100/Month — Is This the Real Answer to Claude Code?

OpenAI Just Bought a 'Personal AI CFO' Startup. It's Their Second Fintech Acquisition in 6 Months.

AI 트렌드를 앞서가세요

매일 아침, 엄선된 AI 뉴스를 받아보세요. 스팸 없음. 언제든 구독 취소.